Poster Session 1 · Wednesday, December 3, 2025 11:00 AM → 2:00 PM

#5000

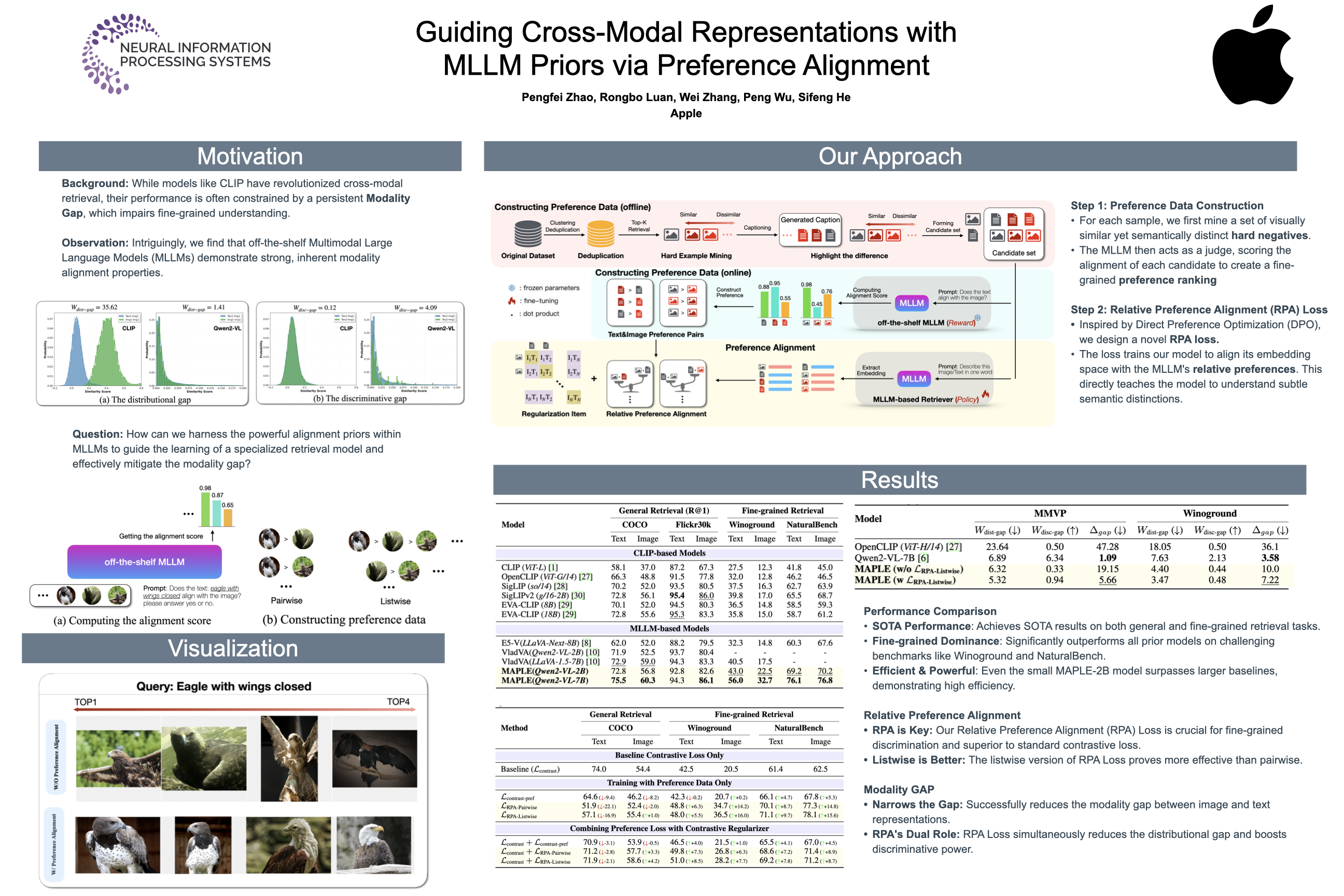

Guiding Cross-Modal Representations with MLLM Priors via Preference Alignment

Abstract

Despite Contrastive Language–Image Pre-training (CLIP)'s remarkable capability to retrieve content across modalities, a substantial modality gap persists in its feature space.

Intriguingly, we discover that off-the-shelf MLLMs (Multimodal Large Language Models) demonstrate powerful inherent modality alignment properties. While recent MLLM-based retrievers with unified architectures partially mitigate this gap, their reliance on coarse modality alignment mechanisms fundamentally limits their potential.

In this work, We introduce MAPLE (Modality-Aligned Preference Learning for Embeddings), a novel framework that leverages the fine-grained alignment priors inherent in MLLM to guide cross-modal representation learning. MAPLE formulates the learning process as reinforcement learning with two key components:

- Automatic preference data construction using off-the-shelf MLLM, and

- a new Relative Preference Alignment (RPA) loss, which adapts Direct Preference Optimization (DPO) to the embedding learning setting.

Experimental results show that our preference-guided alignment achieves substantial gains in fine-grained cross-modal retrieval, underscoring its effectiveness in handling nuanced semantic distinctions.