Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#1511

Optimizing Distributional Geometry Alignment with Optimal Transport for Generative Dataset Distillation

Abstract

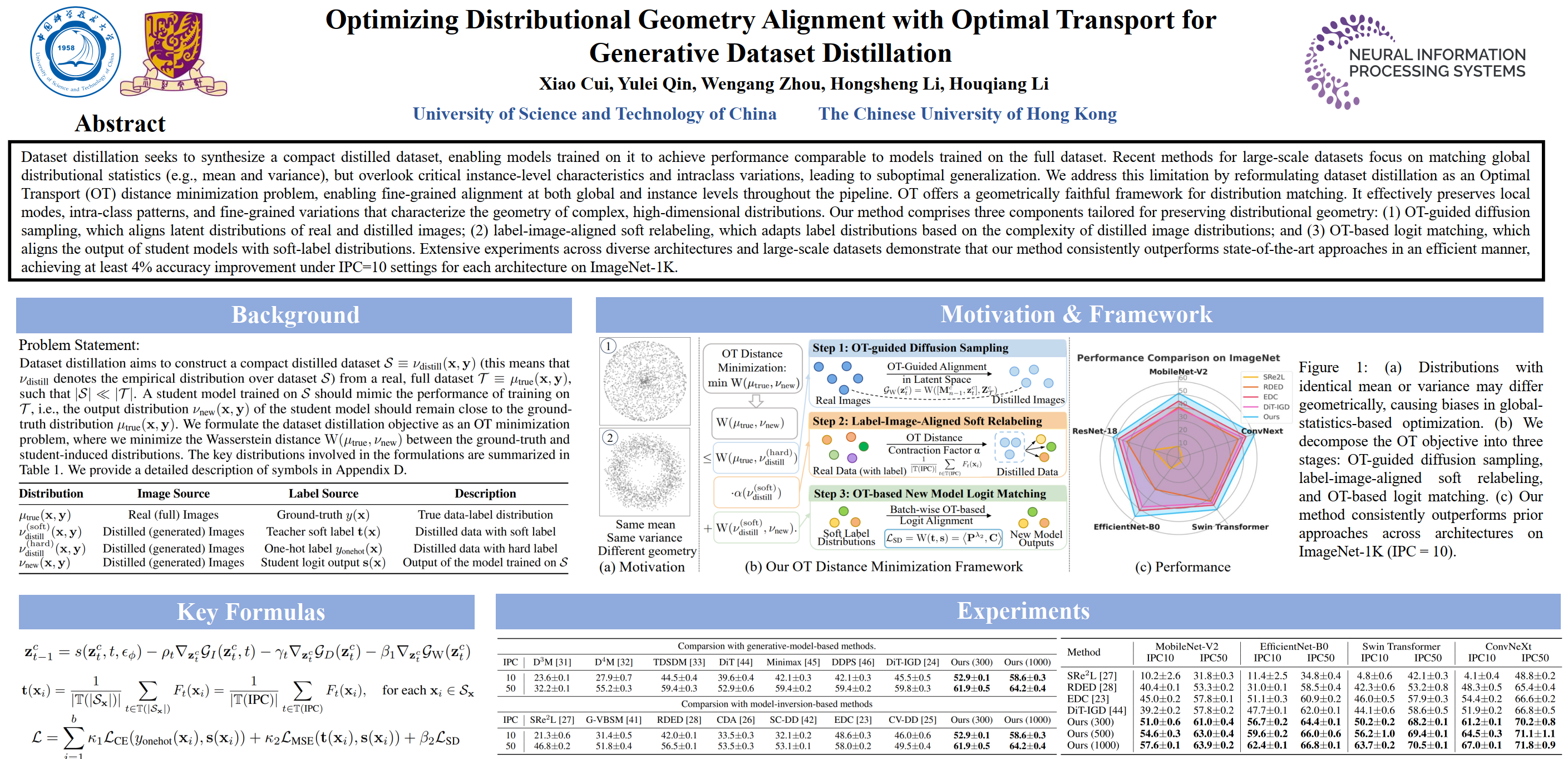

Dataset distillation synthesizes a compact dataset such that models trained on it perform comparably to models trained on the full dataset. Recent methods for large-scale datasets match global distributional statistics (e.g., mean and variance) but overlook critical instance-level characteristics and intra-class variations, which leads to suboptimal generalization.
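To make this limitation concrete, the sketch below implements a generic mean-and-variance matching objective and applies it to two toy one-dimensional sets. The objective is a simplified stand-in, not any specific published method, and the name `moment_matching_loss` and the toy data are hypothetical. The "real" class is bimodal, and the "distilled" set is unimodal but built to share its mean and variance exactly.

```python
import numpy as np

def moment_matching_loss(real: np.ndarray, distilled: np.ndarray) -> float:
    """Squared gap between per-dimension means and variances of two (N, D) feature sets.

    Hypothetical, simplified stand-in for global-statistics matching objectives.
    """
    mean_gap = np.sum((real.mean(axis=0) - distilled.mean(axis=0)) ** 2)
    var_gap = np.sum((real.var(axis=0) - distilled.var(axis=0)) ** 2)
    return float(mean_gap + var_gap)

# Toy 1-D features with shape (8, 1).
# "Real" class: bimodal, four points at -1 and four at +1 -> mean 0, variance 1.
real = np.array([-1.0] * 4 + [1.0] * 4).reshape(-1, 1)
# "Distilled" set: peaked at 0 with tails at +/-2 -> also mean 0, variance 1.
distilled = np.array([-2.0] + [0.0] * 6 + [2.0]).reshape(-1, 1)

print(moment_matching_loss(real, distilled))  # 0.0: the first two moments agree exactly
```

The loss is exactly zero even though the two sets have clearly different shapes, so an objective of this form gives no signal about the modes the distilled set is missing.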

We address this limitation by reformulating dataset distillation as an Optimal Transport (OT) distance minimization problem, which enables fine-grained alignment at both the global and instance levels throughout the pipeline. OT offers a geometrically faithful framework for distribution matching: whereas two distributions with very different shapes can share the same mean and variance, an OT distance accounts for where probability mass lies and how far it must be moved. It therefore preserves the local modes, intra-class patterns, and fine-grained variations that characterize the geometry of complex, high-dimensional distributions.
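As a small illustration of why OT is more discriminative than moment matching, consider a bimodal set and a unimodal set that share the same mean (0) and variance (1). In one dimension, with equal-size and uniformly weighted samples, the optimal transport plan pairs the sorted points, so the p-Wasserstein distance has a closed form. The helper `wasserstein_1d` and the toy data are hypothetical, and this exact 1-D formula is only for illustration; high-dimensional latents require a general OT solver.

```python
import numpy as np

def wasserstein_1d(x: np.ndarray, y: np.ndarray, p: float = 2.0) -> float:
    """Exact p-Wasserstein distance between two equal-size 1-D empirical distributions.

    With uniform weights and equal sample counts, the optimal plan pairs the
    i-th smallest point of x with the i-th smallest point of y.
    """
    if x.ndim != 1 or x.shape != y.shape:
        raise ValueError("expected two 1-D arrays of equal length")
    gaps = np.abs(np.sort(x) - np.sort(y))
    return float(np.mean(gaps ** p) ** (1.0 / p))

real = np.array([-1.0] * 4 + [1.0] * 4)           # bimodal: mean 0, variance 1
distilled = np.array([-2.0] + [0.0] * 6 + [2.0])  # unimodal: mean 0, variance 1

print(wasserstein_1d(real, distilled))  # every sorted pair is 1 apart -> 1.0
```

The distance is 1.0 rather than zero. Because a Wasserstein distance is zero only when the two distributions coincide, driving it down forces the distilled set toward the real set's modes, not just toward its first two moments.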

Our method comprises three components tailored for preserving distributional geometry:

- OT-guided diffusion sampling, which aligns latent distributions of real and distilled images;

- label-image-aligned soft relabeling, which adapts the soft-label distributions to the complexity of the distilled image distributions; and

- OT-based logit matching, which aligns the outputs of student models with the soft-label distributions.
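The first and third components each align two distributions (real versus distilled latents; student outputs versus soft labels). The abstract does not name an OT solver. As an illustration only, the sketch below assumes each distribution is represented as a point cloud and computes entropy-regularized OT with log-domain Sinkhorn iterations, a common approximation, using uniform weights and a squared Euclidean cost. The names `sinkhorn_ot` and `_logsumexp`, the values of `eps` and `n_iters`, and the random stand-in latents are all hypothetical.

```python
import numpy as np

def _logsumexp(z: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable log(sum(exp(z))) along one axis."""
    z_max = np.max(z, axis=axis, keepdims=True)
    out = z_max + np.log(np.sum(np.exp(z - z_max), axis=axis, keepdims=True))
    return np.squeeze(out, axis=axis)

def sinkhorn_ot(x: np.ndarray, y: np.ndarray, eps: float = 0.5, n_iters: int = 500):
    """Entropy-regularized OT between point clouds x (n, d) and y (m, d).

    Hypothetical sketch: uniform weights, squared Euclidean ground cost,
    log-domain Sinkhorn updates. Returns (transport_plan, transport_cost).
    """
    n, m = x.shape[0], y.shape[0]
    log_a = np.full(n, -np.log(n))  # log of uniform weights 1/n on x
    log_b = np.full(m, -np.log(m))  # log of uniform weights 1/m on y
    cost = np.sum((x[:, None, :] - y[None, :, :]) ** 2, axis=-1)  # (n, m)
    f = np.zeros(n)  # dual potential on x
    g = np.zeros(m)  # dual potential on y
    for _ in range(n_iters):
        # f update makes the plan's row sums equal 1/n; g update makes column sums equal 1/m.
        f = eps * (log_a - _logsumexp((g[None, :] - cost) / eps, axis=1))
        g = eps * (log_b - _logsumexp((f[:, None] - cost) / eps, axis=0))
    plan = np.exp((f[:, None] + g[None, :] - cost) / eps)
    return plan, float(np.sum(plan * cost))

rng = np.random.default_rng(0)
real_latents = rng.normal(size=(64, 4))       # hypothetical stand-in for encoded real images
distilled_latents = rng.normal(size=(10, 4))  # hypothetical stand-in for distilled latents
plan, ot_cost = sinkhorn_ot(real_latents, distilled_latents)
print(plan.shape, round(ot_cost, 3))
```

Here `plan[i, j]` is the mass moved from real point `i` to distilled point `j`, and `ot_cost` could serve as an alignment loss. NumPy provides no gradients, so using this as a training objective would require porting the same updates to an autodiff framework. A smaller `eps` tracks unregularized OT more closely but typically needs more iterations.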

Extensive experiments across diverse architectures and large-scale datasets show that our method consistently outperforms state-of-the-art approaches while remaining efficient, improving accuracy by at least 4% for every architecture on ImageNet-1K under the IPC=10 setting (10 images per class).
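For scale, IPC=10 on ImageNet-1K means 10 distilled images for each of the 1,000 classes. The arithmetic below assumes that the full dataset is the standard ILSVRC-2012 training split of 1,281,167 images.

```python
NUM_CLASSES = 1000              # ImageNet-1K classes
FULL_TRAIN_IMAGES = 1_281_167   # standard ILSVRC-2012 training split
IPC = 10                        # images per class in the distilled set

distilled_images = NUM_CLASSES * IPC
fraction = distilled_images / FULL_TRAIN_IMAGES
print(distilled_images, f"{fraction:.2%}")  # 10000 0.78%
```

At IPC=10, the distilled set is therefore under 1% of the original training images.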