Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#3105

On the Stability and Generalization of Meta-Learning: the Impact of Inner-Levels

Abstract

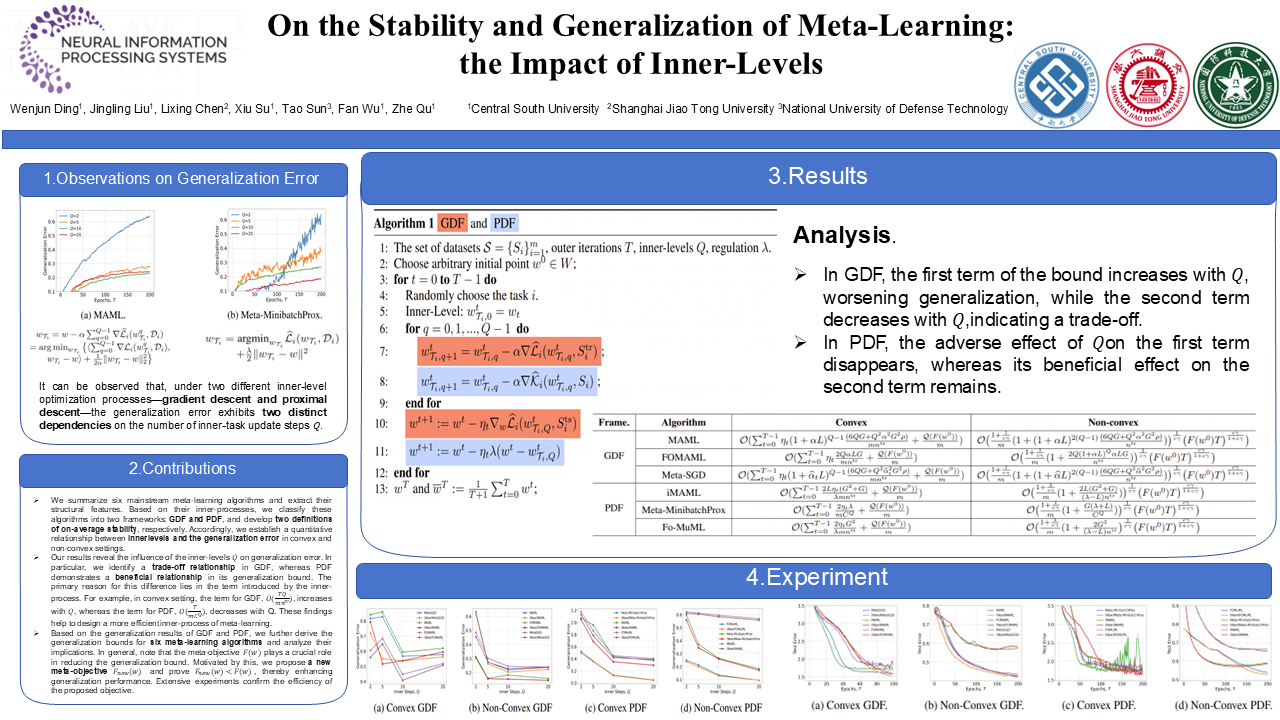

Meta-learning has advanced significantly, and generalization has emerged as a key metric for evaluating meta-learning algorithms. Recent studies have mainly focused on training strategies, data-split methods, and tightening generalization bounds, but they often ignore the impact of inner-levels on generalization. To bridge this gap, this paper considers several prominent meta-learning algorithms and, based on their inner-processes, establishes two frameworks for analyzing their generalization: the Gradient Descent Framework (GDF) and the Proximal Descent Framework (PDF).
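As a rough illustration of the two kinds of inner-process named above, the following minimal sketch contrasts a gradient-descent inner loop with a proximal inner loop on a toy scalar task. It is not the paper's algorithm or analysis: the quadratic task loss, the function names, and the parameters `step_size`, `reg`, and `num_steps` are all assumptions chosen so that the proximal step has a closed form.

```python
# Toy task loss (an assumption for illustration): L(w) = 0.5 * (w - target)**2


def task_grad(w, target):
    """Gradient of the toy quadratic task loss with respect to w."""
    return w - target


def inner_gradient_descent(theta, target, step_size, num_steps):
    """Adapt from the meta-parameter theta with plain gradient steps."""
    w = theta
    for _ in range(num_steps):
        w = w - step_size * task_grad(w, target)
    return w


def inner_proximal_descent(theta, target, reg, num_steps):
    """Adapt from theta with proximal steps.

    Each step solves argmin_w L(w) + (reg / 2) * (w - w_prev)**2,
    which for the toy quadratic loss is (target + reg * w_prev) / (1 + reg).
    """
    w = theta
    for _ in range(num_steps):
        w = (target + reg * w) / (1.0 + reg)
    return w


if __name__ == "__main__":
    # Hypothetical settings: start at theta = 0 and adapt toward target = 1.
    for steps in (1, 2, 5):
        gd = inner_gradient_descent(0.0, 1.0, step_size=0.5, num_steps=steps)
        prox = inner_proximal_descent(0.0, 1.0, reg=1.0, num_steps=steps)
        print(steps, gd, prox)
```

In this toy setting, `num_steps` plays the role of the number of inner-levels; both loops move the task parameter from the shared initialization toward the task optimum as it grows.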

Within these frameworks, we introduce two novel definitions of algorithmic stability and derive the corresponding generalization bounds. Our findings reveal a trade-off with respect to inner-levels under GDF, whereas under PDF inner-levels have a beneficial effect on generalization. Moreover, we highlight the critical role of the meta-objective function in minimizing generalization error.

Inspired by these findings, we propose a new, simplified definition of the meta-objective function to enhance generalization performance. Extensive real-world experiments support our findings and show the improvement achieved by the new meta-objective function.