Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#3716

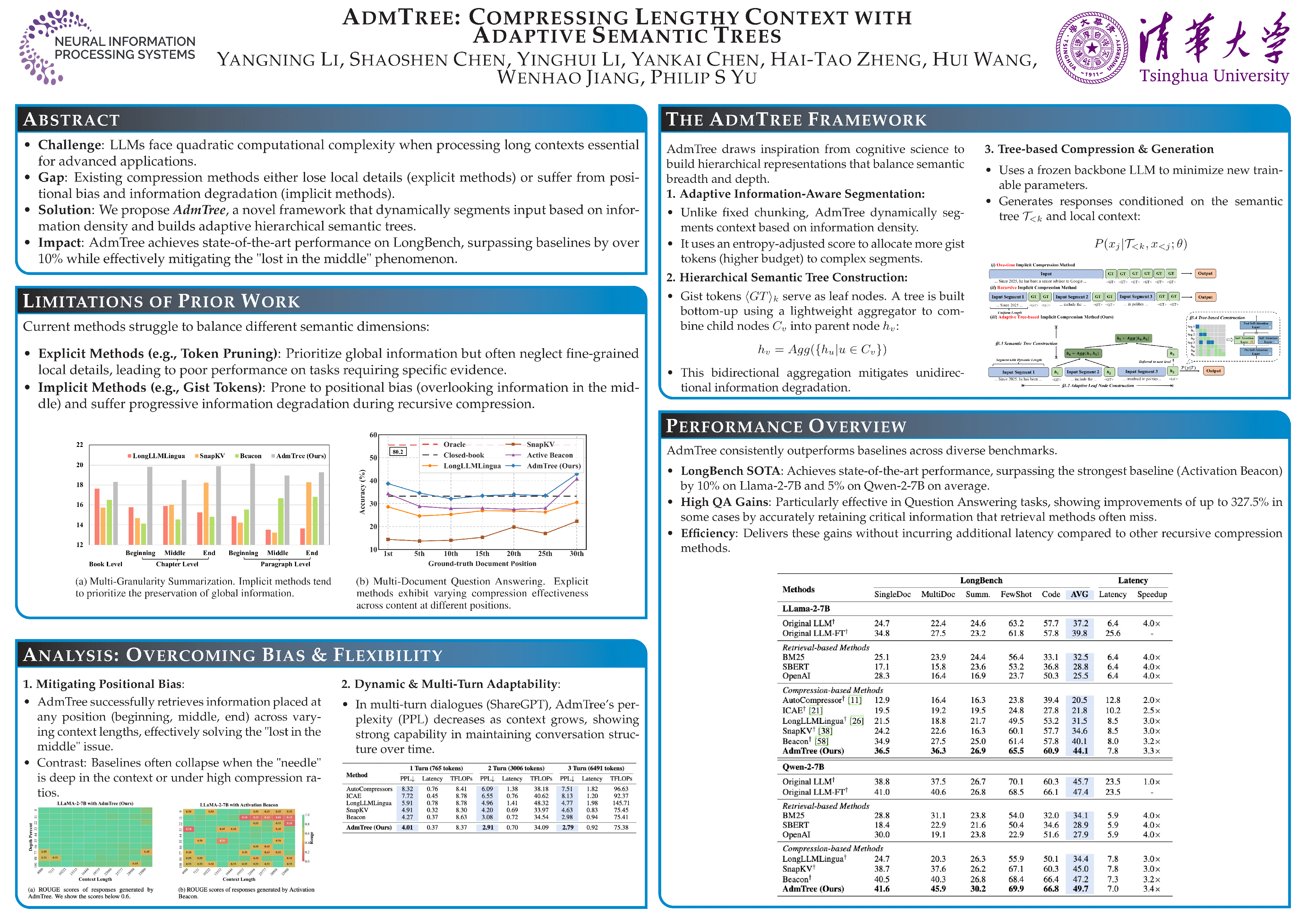

AdmTree: Compressing Lengthy Context with Adaptive Semantic Trees

Yangning Li, Shaoshen Chen, Yinghui Li, Yankai Chen, Hai-Tao Zheng, Hui Wang, Wenhao Jiang, Philip S. Yu

Abstract

The quadratic complexity of self-attention limits Large Language Models (LLMs) in processing long contexts, a capability vital for many advanced applications. Context compression aims to mitigate this computational barrier while preserving essential semantic information. However, existing methods often falter: explicit methods can sacrifice local detail, while implicit ones may exhibit positional biases, struggle with information degradation, or fail to capture long-range semantic dependencies.

We introduce AdmTree, a novel framework for adaptive, hierarchical context compression designed to maintain high semantic fidelity while remaining efficient. AdmTree dynamically segments the input based on information density, employing gist tokens to summarize variable-length segments as leaves in a semantic binary tree.
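To make the idea of density-based segmentation concrete, here is a minimal sketch in Python. It is not the authors' procedure: the abstract does not say how information density is measured or how boundaries are chosen. The sketch assumes a hypothetical per-token density score is already available (for example, some surprisal-like value) and closes a segment whenever the accumulated score reaches a hypothetical `budget`, so dense regions yield short segments and sparse regions yield long ones.

```python
from typing import List, Tuple


def segment_by_density(scores: List[float], budget: float) -> List[Tuple[int, int]]:
    """Split token positions into contiguous [start, end) spans.

    `scores` is an assumed per-token density signal and `budget` an assumed
    threshold; neither comes from the abstract. A span is closed as soon as
    its accumulated score reaches the budget.
    """
    segments: List[Tuple[int, int]] = []
    start = 0
    acc = 0.0
    for i, score in enumerate(scores):
        acc += score
        if acc >= budget:
            segments.append((start, i + 1))
            start = i + 1
            acc = 0.0
    # Any leftover low-density tail becomes a final segment.
    if start < len(scores):
        segments.append((start, len(scores)))
    return segments


# Toy input: a sparse prefix, two dense tokens, and a sparse tail.
scores = [0.1, 0.1, 0.1, 0.1, 0.9, 0.9, 0.9, 0.1, 0.1]
segments = segment_by_density(scores, budget=0.8)
```

In this toy run the sparse prefix is absorbed into one long segment, while each of the later dense tokens gets a segment of its own. Each resulting span would then be summarized by its own gist tokens.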

This structure, combined with a lightweight aggregation mechanism and a frozen backbone LLM (minimizing new trainable parameters), enables efficient hierarchical abstraction of the context.
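The hierarchical abstraction can be illustrated with a small, self-contained Python sketch. Several things here are assumptions rather than details from the abstract: leaf summaries are represented as plain vectors, the tree is built bottom-up by pairing adjacent nodes, and a simple element-wise mean stands in for the lightweight aggregation mechanism, whose actual form is not described. The names `Node`, `aggregate`, and `build_tree` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    vec: List[float]                 # summary vector for the span this node covers
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def aggregate(a: List[float], b: List[float]) -> List[float]:
    """Placeholder aggregation: element-wise mean of two child summaries."""
    return [(x + y) / 2.0 for x, y in zip(a, b)]


def build_tree(leaves: List[List[float]]) -> Node:
    """Build a binary tree bottom-up by merging adjacent nodes level by level."""
    if not leaves:
        raise ValueError("at least one leaf summary is required")
    level = [Node(v) for v in leaves]
    while len(level) > 1:
        nxt: List[Node] = []
        for i in range(0, len(level) - 1, 2):
            l, r = level[i], level[i + 1]
            nxt.append(Node(aggregate(l.vec, r.vec), l, r))
        if len(level) % 2 == 1:
            # An unpaired last node is carried up unchanged.
            nxt.append(level[-1])
        level = nxt
    return level[0]


# Three toy leaf summaries (one per segment).
root = build_tree([[1.0, 0.0], [3.0, 0.0], [5.0, 4.0]])
```

Under these assumptions, nodes near the root summarize wide spans of the context, while the leaves keep segment-level detail, which mirrors the coarse-to-fine structure the abstract describes.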

By preserving fine-grained details alongside global semantic coherence, mitigating positional bias, and adapting dynamically to content, AdmTree retains the semantic information of long contexts.