Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#4206

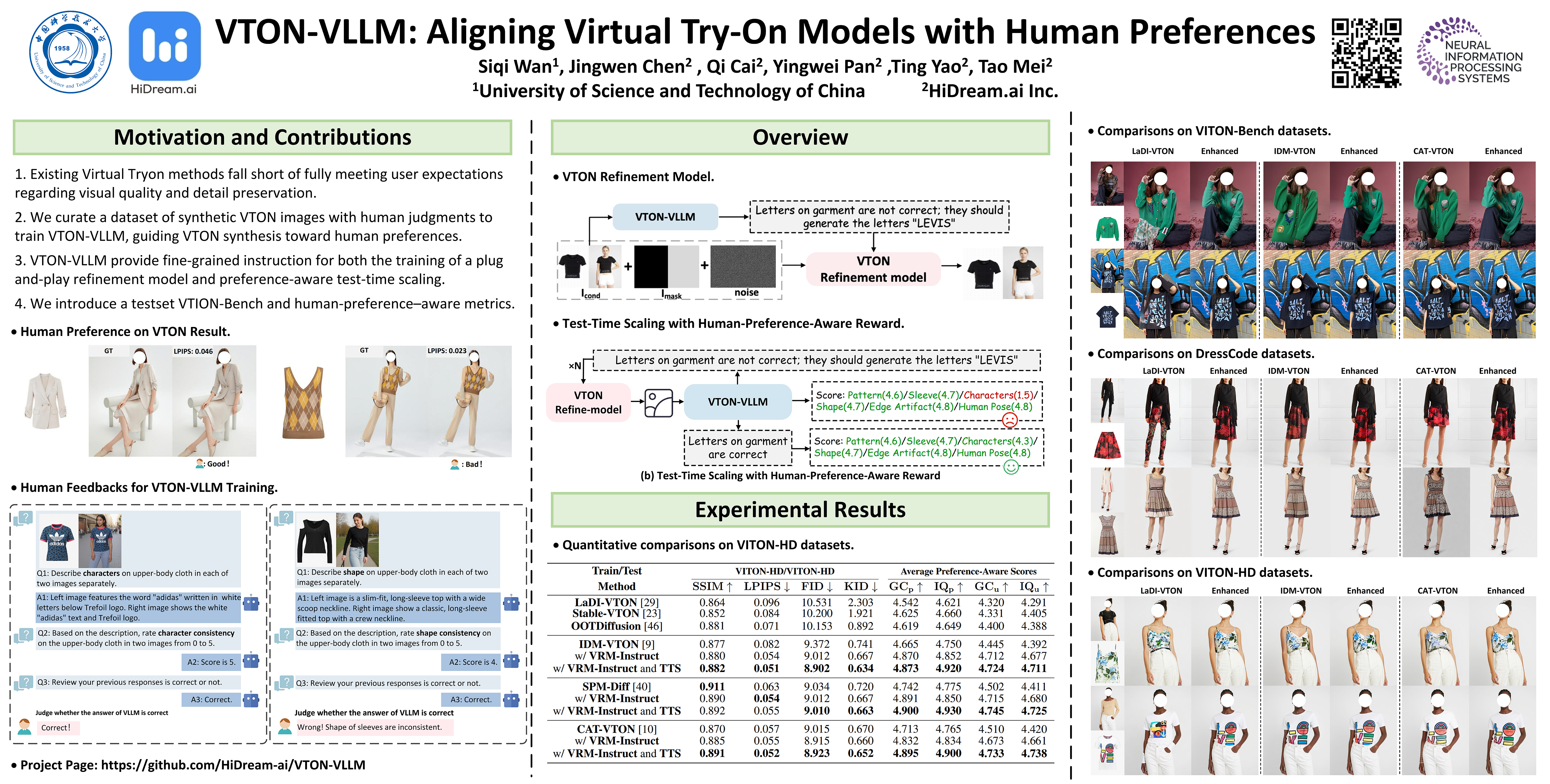

VTON-VLLM: Aligning Virtual Try-On Models with Human Preferences

Abstract

Diffusion models have achieved remarkable success in the virtual try-on (VTON) task, yet they often fall short of user expectations for visual quality and detail preservation.

To alleviate this issue, we curate a dataset of synthesized VTON images annotated with human judgments across multiple perceptual criteria. A vision large language model (VLLM), namely VTON-VLLM, is then trained on these annotations. VTON-VLLM functions as a unified "fashion expert" that is capable of both evaluating VTON synthesis and steering it towards human preferences.

Technically, beyond serving as an automatic VTON evaluator, VTON-VLLM improves VTON models in two key ways:

- providing fine-grained supervisory signals during the training of a plug-and-play VTON refinement model, and

- enabling adaptive and preference-aware test-time scaling at inference.
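The second point can be illustrated with a generic best-of-N loop that stops early once a candidate scores well enough. This is only a minimal sketch of preference-aware test-time scaling in general, not the procedure used in the paper, which the abstract does not specify. The names `generate`, `score`, `max_samples`, and `good_enough` are hypothetical: `generate` stands in for any VTON sampler and `score` for any preference evaluator that returns a scalar.

```python
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def best_of_n(
    generate: Callable[[int], T],   # hypothetical sampler: seed -> candidate image
    score: Callable[[T], float],    # hypothetical evaluator: candidate -> preference score
    max_samples: int = 8,           # assumed sampling budget
    good_enough: float = 0.9,       # assumed early-stop threshold
) -> Tuple[T, float, int]:
    """Return (best candidate, its score, number of samples drawn).

    Sampling is adaptive: easy inputs stop after a few draws,
    hard inputs use the full budget.
    """
    if max_samples < 1:
        raise ValueError("max_samples must be at least 1")

    best, best_score, used = None, float("-inf"), 0
    for seed in range(max_samples):
        candidate = generate(seed)
        candidate_score = score(candidate)
        used += 1
        # Keep the highest-scoring candidate seen so far.
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score
        # Stop as soon as the evaluator is satisfied.
        if best_score >= good_enough:
            break
    return best, best_score, used
```

In this sketch the evaluator controls both which candidate is returned and how much compute is spent, which is one plausible reading of "adaptive"; other schemes, such as scoring intermediate denoising steps, would fit the same description.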

To benchmark VTON models more holistically, we introduce VITON-Bench, a challenging test suite of complex try-on scenarios, and human-preference–aware metrics. Extensive experiments demonstrate that powering VTON models with our VTON-VLLM markedly enhances alignment with human preferences. Code is publicly available at: https://github.com/HiDream-ai/VTON-VLLM/.