Poster Session 6 · Friday, December 5, 2025, 4:30 PM – 7:30 PM

#509

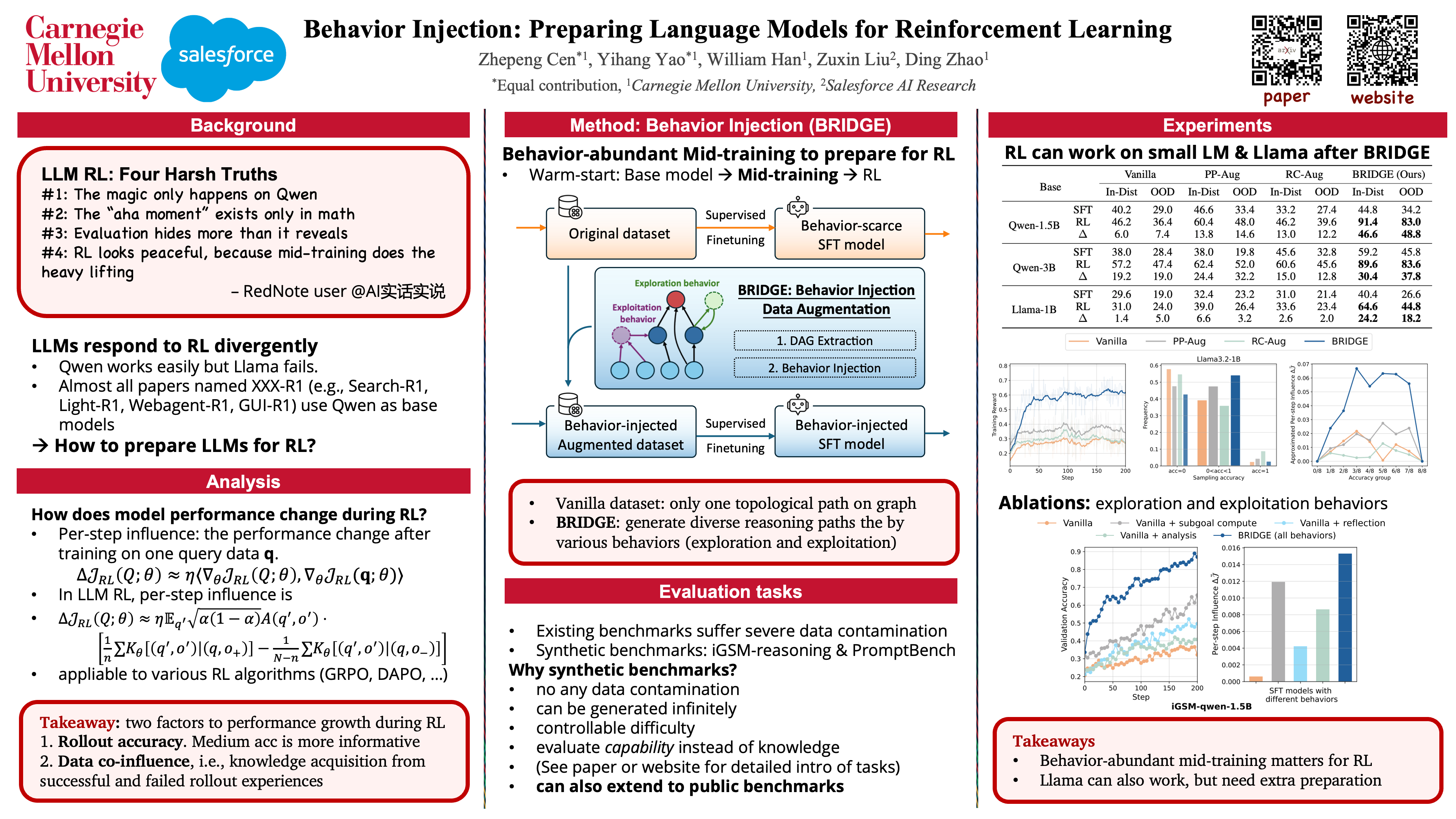

Behavior Injection: Preparing Language Models for Reinforcement Learning

Abstract

Reinforcement learning (RL) has emerged as a powerful post-training technique for incentivizing reasoning in large language models (LLMs). However, LLMs respond very inconsistently to RL finetuning: some show substantial performance gains, while others plateau or even degrade.

To understand this divergence, we analyze the per-step influence of the RL objective and identify two key conditions for effective post-training:

- RL-informative rollout accuracy, and

- strong data co-influence, which quantifies how much the training data affects performance on other samples.
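The abstract names both conditions without defining them formally. The sketch below assumes two common readings and should not be taken as the authors' definitions. First, rollout accuracy is "RL-informative" when a prompt's sampled responses are neither all correct nor all wrong: under group-normalized advantages (as in GRPO-style training with 0/1 rewards), a uniform group yields zero advantage and therefore no gradient signal, and the reward variance p(1 − p) peaks at p = 0.5. Second, co-influence is approximated by the first-order, TracIn-style gradient inner product between two samples. The function names `group_advantages`, `reward_variance`, and `co_influence` are hypothetical.

```python
from statistics import mean, pstdev


def group_advantages(rewards, eps=1e-6):
    """GRPO-style advantages for the rollouts of a single prompt.

    Assumption: binary 0/1 rewards. Each reward is centred on the group mean
    and scaled by the group standard deviation; if every rollout has the same
    reward, all advantages are 0 and the prompt contributes no policy gradient.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def reward_variance(p):
    """Variance of a Bernoulli(p) reward: zero at p = 0 or 1, maximal at p = 0.5."""
    return p * (1.0 - p)


def co_influence(grad_i, grad_j, lr=1.0):
    """First-order estimate of how much one SGD step on sample i lowers the loss on sample j.

    Hypothetical proxy: loss_j decreases by roughly lr * <g_i, g_j>, so a larger
    inner product means training on i transfers more to j.
    """
    return lr * sum(a * b for a, b in zip(grad_i, grad_j))


if __name__ == "__main__":
    print(group_advantages([1, 1, 1, 1]))  # all correct -> [0.0, 0.0, 0.0, 0.0]
    print(group_advantages([1, 0, 1, 0]))  # mixed group -> nonzero advantages
    print(reward_variance(0.5), reward_variance(1.0))
    print(co_influence([1.0, 2.0], [3.0, 4.0], lr=0.1))
```

Under this reading, a model whose per-prompt accuracy sits at 0 or 1 on most training prompts receives little RL signal, whereas gradients that align across samples let each update improve performance on other samples as well.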

Guided by these insights, we propose behavior injection, a task-agnostic data augmentation scheme applied prior to RL. Behavior injection enriches the supervised finetuning (SFT) data by seeding exploratory and exploitative behaviors, effectively making the model more RL-ready.

We evaluate our method on two reasoning benchmarks with multiple base models. The results show that our theoretically motivated augmentation can significantly increase the performance gain that RL achieves over the pre-RL model.