Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#2912

Data Selection Matters: Towards Robust Instruction Tuning of Large Multimodal Models

Abstract

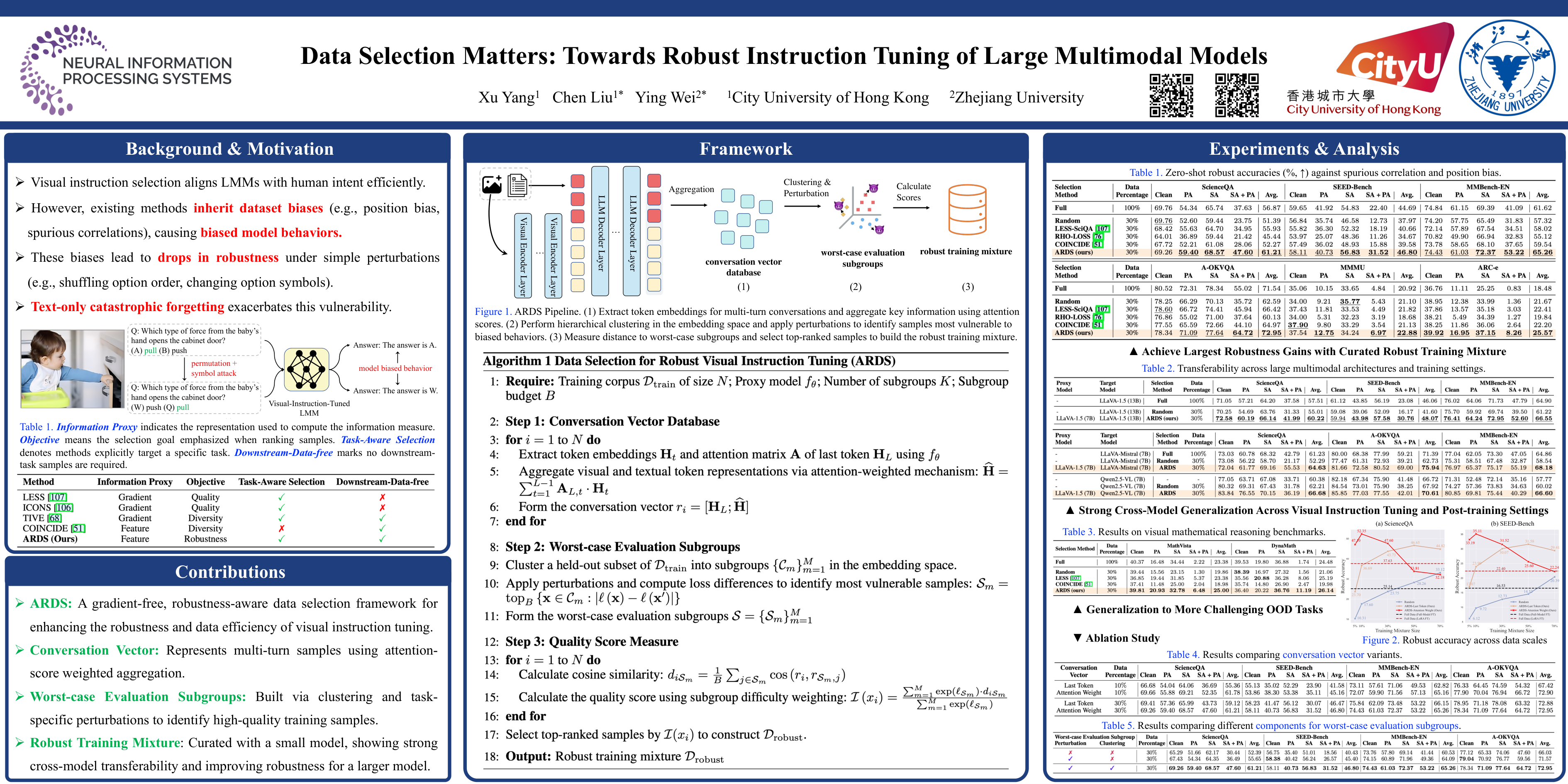

Selecting a compact subset of visual instruction–following data has emerged as an effective way to align large multimodal models with human intentions while avoiding the high cost of full-dataset training. Yet we observe that both full-data training and existing state-of-the-art data selection methods tend to inherit underlying dataset biases such as position bias and spurious correlations, leading to biased model behaviors.

To address this issue, we introduce ARDS, a robustness-aware targeted visual instruction-selection framework that explicitly mitigates these weaknesses, sidestepping the need for access to downstream data or time-consuming gradient computation.

Specifically, we first identify the worst-case evaluation subgroups through visual and textual task-specific perturbations. The robust training mixture is then constructed by prioritizing samples that are semantically closer to these subgroups in a rich multimodal embedding space.

Extensive experiments demonstrate that ARDS substantially boosts both robustness and data efficiency for visual instruction tuning. We also showcase that the robust mixtures produced with a smaller model transfer effectively to larger architectures. Our code and selected datasets that have been demonstrated transferable across models are available at https://github.com/xyang583/ARDS.