Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#812

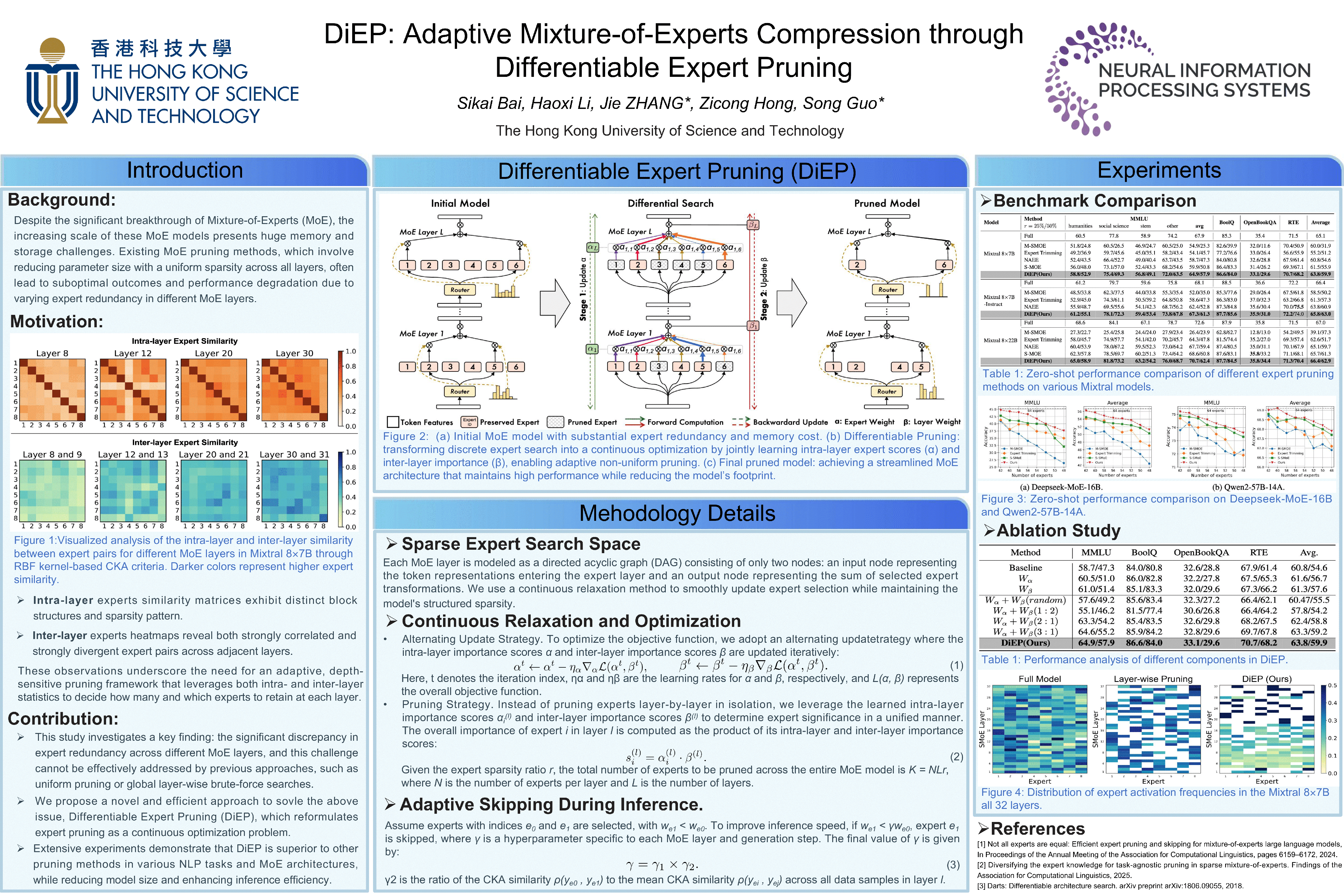

DiEP: Adaptive Mixture-of-Experts Compression through Differentiable Expert Pruning

Abstract

Despite the significant breakthroughs of Mixture-of-Experts (MoE) models, their growing scale poses substantial memory and storage challenges. Existing MoE pruning methods reduce parameter count by applying a uniform sparsity across all layers, which often leads to suboptimal results and performance degradation because expert redundancy varies across MoE layers.

To address this, we propose a non-uniform pruning strategy, dubbed Differentiable Expert Pruning (DiEP), which adaptively adjusts pruning rates at the layer level while jointly learning inter-layer importance, effectively capturing the varying redundancy across different MoE layers. By transforming the global discrete search space into a continuous one, our method handles exponentially growing non-uniform expert combinations, enabling adaptive gradient-based pruning.
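As a rough illustration of what non-uniform, layer-adaptive expert selection can look like, the sketch below ranks experts under a single global budget instead of a fixed per-layer quota. It is not the authors' implementation: the names `alpha` (per-expert logits), `beta` (per-layer importance weights), `select_experts`, and the softmax-times-weight scoring rule are all assumptions made for this example, and the scores are fixed by hand rather than learned by gradient descent.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def select_experts(alpha, beta, keep_total, min_per_layer=1):
    """Pick experts under one global budget (hypothetical scoring rule).

    alpha: list of per-layer lists of expert logits (assumed learned scores)
    beta: list of per-layer importance weights (assumed learned scores)
    keep_total: total number of experts to keep across all layers
    min_per_layer: experts reserved in every layer so no layer is emptied
    """
    if keep_total < min_per_layer * len(alpha):
        raise ValueError("budget too small for the per-layer minimum")

    kept = {}
    leftovers = []
    for layer, logits in enumerate(alpha):
        # Continuous relaxation: each expert gets a real-valued score.
        scores = [beta[layer] * p for p in softmax(logits)]
        ranked = sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)
        # Reserve the best experts of this layer first.
        kept[layer] = ranked[:min_per_layer]
        leftovers.extend((scores[e], layer, e) for e in ranked[min_per_layer:])

    # Spend the remaining budget globally, so layers may keep different counts.
    remaining = keep_total - min_per_layer * len(alpha)
    leftovers.sort(key=lambda item: item[0], reverse=True)
    for _, layer, expert in leftovers[:remaining]:
        kept[layer].append(expert)

    return {layer: sorted(experts) for layer, experts in kept.items()}


# Toy input: layer 0 is dominated by one expert, layer 1 is nearly flat.
alpha = [[2.0, 0.1, 0.0, -1.0], [0.5, 0.3, 0.6, 0.4]]
beta = [1.0, 1.0]
kept = select_experts(alpha, beta, keep_total=4)
print(kept)
```

With these toy scores, half of the eight experts are kept overall, but the layer dominated by a single expert retains one expert while the flatter layer retains three. A uniform scheme would instead keep two experts in each layer regardless of how the scores are distributed.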

Extensive experiments on five advanced MoE models demonstrate the efficacy of our method across various NLP tasks. Notably, DiEP retains around 92% of original performance on Mixtral 8×7B with only half the experts, outperforming other pruning methods by up to 7.1% on the challenging MMLU dataset.