Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#5208

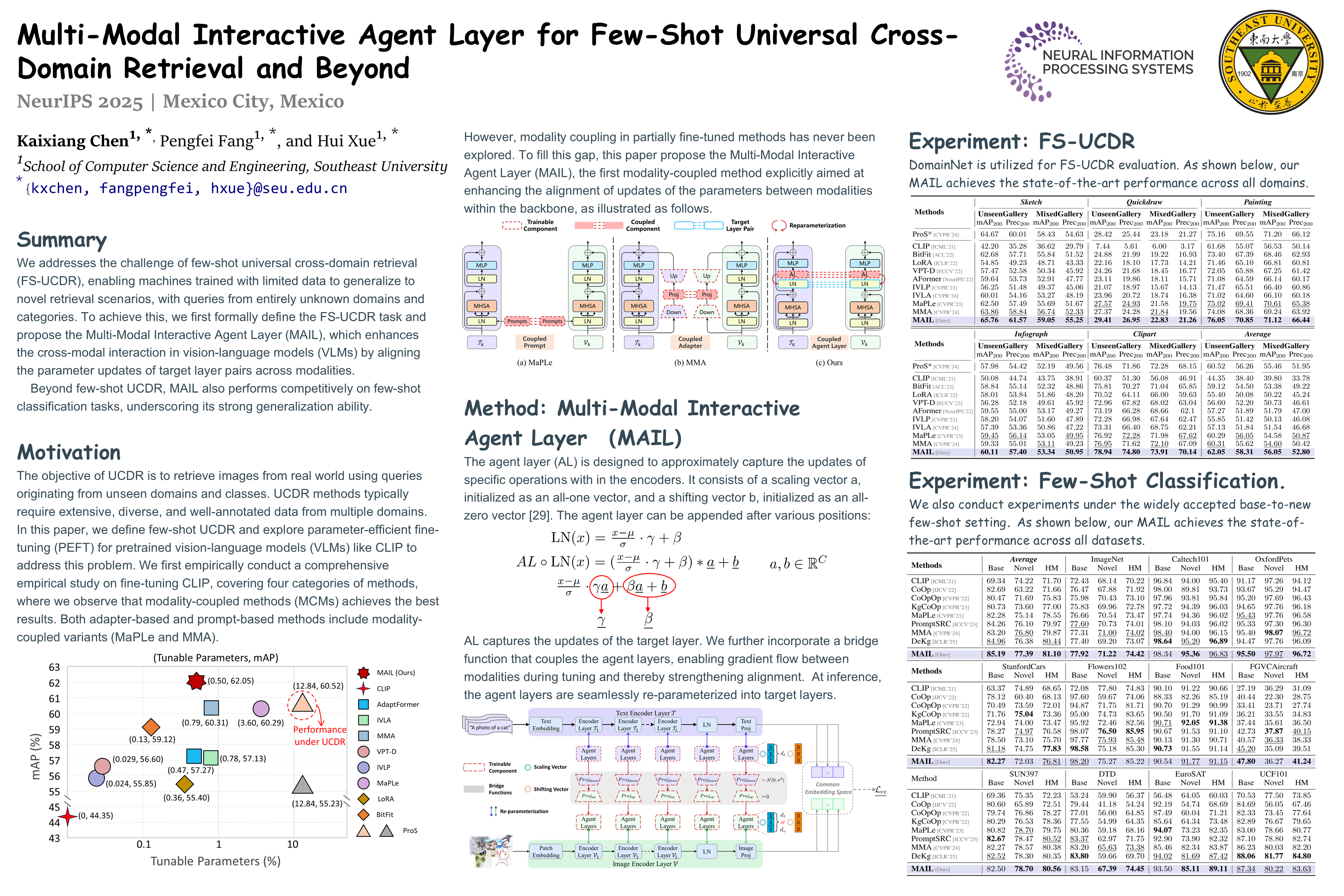

Multi-Modal Interactive Agent Layer for Few-Shot Universal Cross-Domain Retrieval and Beyond

Abstract

This paper is the first to address the challenge of few-shot universal cross-domain retrieval (FS-UCDR), in which machines trained with limited data must generalize to novel retrieval scenarios, with queries from entirely unknown domains and categories. To this end, we formally define the FS-UCDR task and propose the Multi-Modal Interactive Agent Layer (MAIL), which enhances cross-modal interaction in vision-language models (VLMs) by aligning the parameter updates of target layer pairs across modalities.

Specifically, MAIL freezes the selected target layer pair and introduces a trainable agent layer pair to approximate localized parameter updates. A bridge function is then introduced to couple the agent layer pair, enabling gradient communication across modalities to facilitate update alignment.
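The freeze-and-couple idea can be illustrated with a minimal sketch. Everything concrete here is an assumption made for illustration: the 2x2 weights, the additive form of the agent layers, and in particular the linear `bridge`, which merely stands in for the paper's bridge function (its actual form is not given in this abstract). The names `W_TEXT`, `W_VISION`, `bridge`, and `forward` are hypothetical.

```python
# Hypothetical sketch: shapes, names, and the linear bridge are assumptions,
# not the authors' implementation.

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def add(a, b):
    """Element-wise sum of two equally shaped matrices."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

# Frozen target layer pair: these weights are never updated.
W_TEXT = [[1.0, 0.0], [0.0, 1.0]]
W_VISION = [[0.5, 0.0], [0.0, 0.5]]

def bridge(agent_text, bridge_weight):
    """Assumed bridge: derive the vision agent layer from the text agent layer."""
    return matmul(agent_text, bridge_weight)

def forward(x_text, x_vision, agent_text, bridge_weight):
    """Run both branches; each agent layer adds a local update to its frozen layer."""
    agent_vision = bridge(agent_text, bridge_weight)
    out_text = matmul(x_text, add(W_TEXT, agent_text))
    out_vision = matmul(x_vision, add(W_VISION, agent_vision))
    return out_text, out_vision

ZERO = [[0.0, 0.0], [0.0, 0.0]]
IDENTITY = [[1.0, 0.0], [0.0, 1.0]]
```

Because the vision agent layer is computed from the text agent layer in this sketch, a change to the trainable text-side parameters shifts the outputs of both branches, and a loss on either branch would send gradients to the same parameters. With `agent_text` set to `ZERO`, `forward` reproduces the outputs of the frozen layers alone.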

The proposed MAIL offers four key advantages:

- its cross-modal interaction mechanism improves knowledge acquisition from limited data, making it highly effective in low-data scenarios;

- at inference time, MAIL is merged into the VLM via reparameterization, leaving inference complexity unchanged;

- extensive experiments validate the superiority of MAIL, which achieves substantial performance gains over data-efficient UCDR methods while requiring significantly fewer training samples;

- beyond UCDR, MAIL also performs competitively on few-shot classification tasks, underscoring its strong generalization ability.
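The reparameterization claim above can be checked on a toy case. The sketch below assumes the agent layer contributes an additive linear update next to the frozen layer; under that assumption the two branches used in training collapse into a single weight matrix, so inference runs one matrix product per layer, as in the original model. The function names and the numbers are hypothetical.

```python
# Hypothetical sketch of additive reparameterization; not the authors' code.

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def add(a, b):
    """Element-wise sum of two equally shaped matrices."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def train_time_output(x, frozen, agent):
    """Two branches: the frozen layer plus the trainable agent layer."""
    return add(matmul(x, frozen), matmul(x, agent))

def merge(frozen, agent):
    """Fold the learned agent update into the frozen weight once, after training."""
    return add(frozen, agent)

def inference_output(x, merged):
    """Single branch: same cost as the original layer."""
    return matmul(x, merged)

x = [[1.0, 2.0]]
frozen = [[2.0, 0.0], [1.0, 1.0]]
agent = [[0.5, -1.0], [0.0, 0.25]]
merged = merge(frozen, agent)
```

For these inputs, `train_time_output(x, frozen, agent)` and `inference_output(x, merged)` agree, because matrix multiplication distributes over addition.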
