Poster Session 5 · Friday, December 5, 2025 11:00 AM → 2:00 PM

#5511

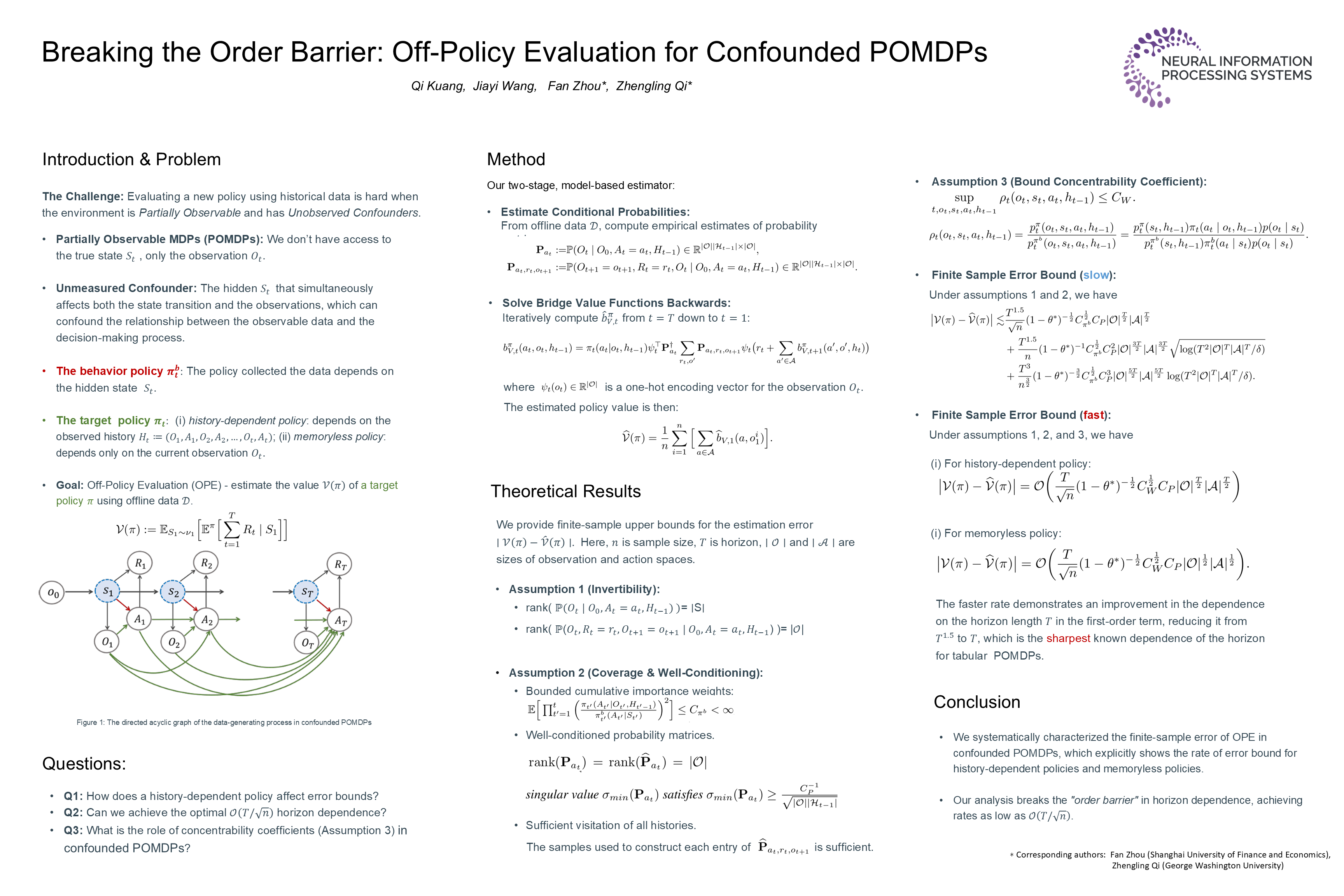

Breaking the Order Barrier: Off-Policy Evaluation for Confounded POMDPs

Abstract

We consider off-policy evaluation (OPE) in Partially Observable Markov Decision Processes (POMDPs) with unobserved confounding. Recent work has introduced bridge functions to circumvent unmeasured confounding and to construct estimators of the policy value, yet how the statistical error bounds of these estimators depend on the horizon length and the size of the state-action space remains largely unexplored.

In this paper, we systematically investigate the finite-sample error bounds of OPE estimators in finite-horizon tabular confounded POMDPs. Specifically, we show that under certain rank conditions, the estimation error for policy value can achieve a rate of , excluding the cardinality of the observation space and the action space . With an additional mild condition on the concentrability coefficients in confounded POMDPs, the rate of estimation error can be improved to .

We also show that for a fully history-dependent policy, the estimation error scales as , highlighting the exponential error dependence introduced by using history-based proxies to infer hidden states. Furthermore, when the target policy is memoryless, the error bound improves to , which matches the optimal rate known for tabular MDPs.

To the best of our knowledge, this is the first work to provide a comprehensive finite-sample analysis of OPE in confounded POMDPs.