Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#3016

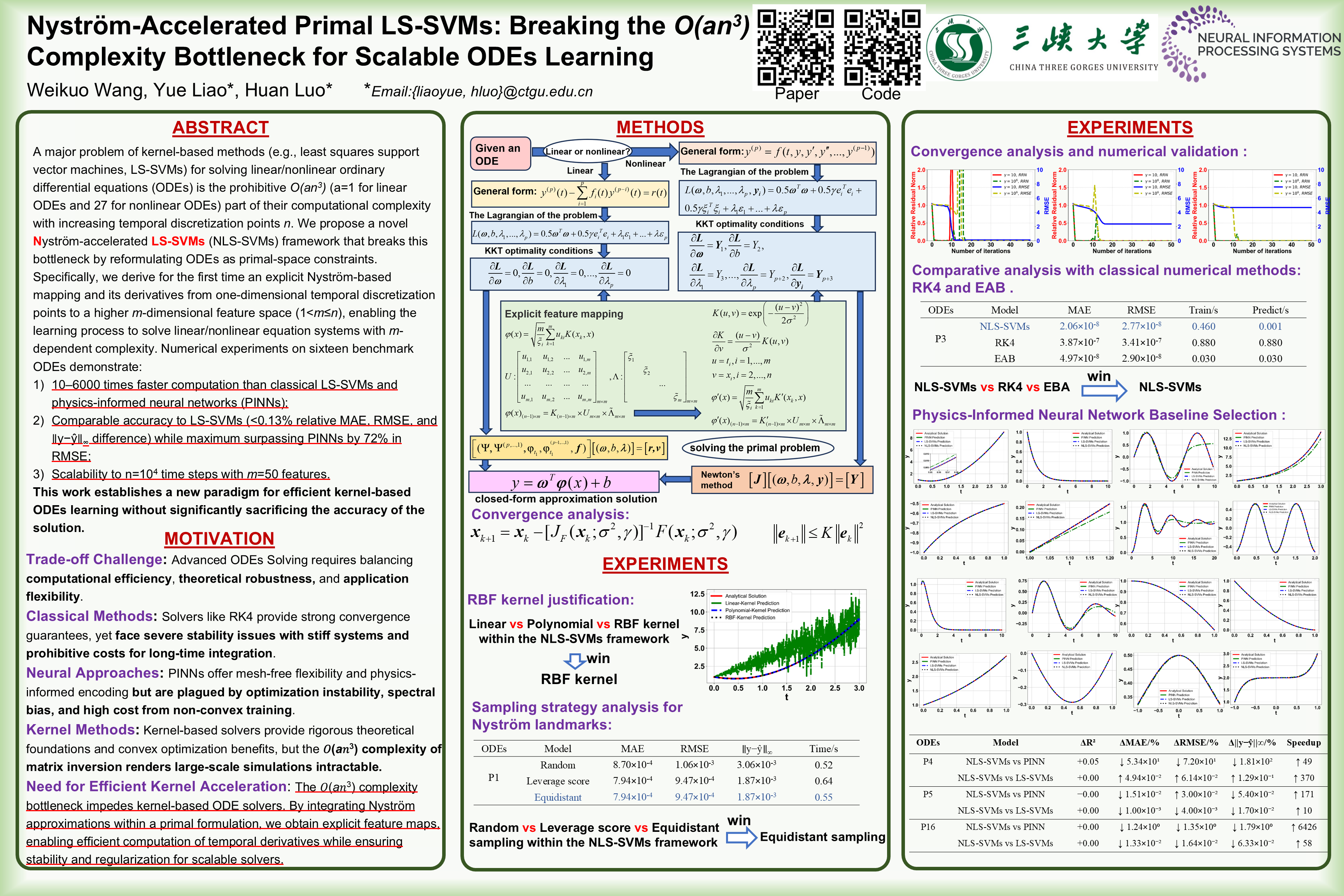

Nyström-Accelerated Primal LS-SVMs: Breaking the Complexity Bottleneck for Scalable ODEs Learning

Abstract

A major problem of kernel-based methods (e.g., least squares support vector machines, LS-SVMs) for solving linear/nonlinear ordinary differential equations (ODEs) is the prohibitive ( for linear ODEs and 27 for nonlinear ODEs) part of their computational complexity with increasing temporal discretization points .

We propose a novel Nyström-accelerated LS-SVM framework that breaks this bottleneck by reformulating ODEs as primal-space constraints. Specifically, we derive, for the first time, an explicit Nyström-based mapping and its derivatives from one-dimensional temporal discretization points to a higher -dimensional feature space (), enabling the learning process to solve linear/nonlinear equation systems with -dependent complexity.
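To make the idea of an explicit Nyström-based mapping and its derivative concrete, the following is a minimal sketch rather than the authors' implementation. It assumes a Gaussian (RBF) kernel, uniformly spaced landmark points, and a standard eigendecomposition-based Nyström construction; the names `rbf`, `nystrom_map`, `landmarks`, `sigma`, and `rtol` are illustrative and do not come from the abstract.

```python
import numpy as np

def rbf(t, z, sigma):
    """Gaussian kernel matrix k(t_i, z_j) for 1-D inputs t and z."""
    d = t[:, None] - z[None, :]
    return np.exp(-d**2 / (2.0 * sigma**2))

def nystrom_map(landmarks, sigma, rtol=1e-10):
    """Return phi(t) and its time derivative dphi(t) (assumed construction)."""
    K_mm = rbf(landmarks, landmarks, sigma)
    lam, U = np.linalg.eigh(K_mm)          # K_mm = U diag(lam) U^T
    keep = lam > rtol * lam.max()          # drop numerically null directions
    proj = U[:, keep] / np.sqrt(lam[keep]) # columns u_j / sqrt(lam_j)

    def phi(t):
        # phi(t) = Lambda^{-1/2} U^T k_m(t), one row per time point
        return rbf(t, landmarks, sigma) @ proj

    def dphi(t):
        # d/dt k(t, z) = -(t - z) / sigma^2 * k(t, z) for the Gaussian kernel
        d = t[:, None] - landmarks[None, :]
        dK = -d / sigma**2 * rbf(t, landmarks, sigma)
        return dK @ proj

    return phi, dphi

# Hypothetical setup: 8 landmarks and 200 time points on [0, 1].
landmarks = np.linspace(0.0, 1.0, 8)
phi, dphi = nystrom_map(landmarks, sigma=0.1)
t = np.linspace(0.0, 1.0, 200)
Phi, dPhi = phi(t), dphi(t)   # feature matrix and its derivative
```

With features and their derivatives available in closed form, an ODE residual evaluated at the time points becomes a set of equations in the primal weights, so the size of the system to solve is governed by the number of features rather than the number of time points. In this sketch, when no eigenvalues are dropped, the feature inner products reproduce the kernel exactly at the landmark points, up to round-off.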

Numerical experiments on sixteen benchmark ODEs demonstrate:

- times faster computation than classical LS-SVMs and physics-informed neural networks (PINNs)

- comparable accuracy to LS-SVMs ( relative MAE, RMSE, and difference) while surpassing PINNs by up to 72% in RMSE

- scalability to time steps with features.

This work establishes a new paradigm for efficient kernel-based ODE learning without significantly sacrificing solution accuracy.