Poster Session 1 · Wednesday, December 3, 2025 11:00 AM → 2:00 PM

#1905

SimulMEGA: MoE Routers are Advanced Policy Makers for Simultaneous Speech Translation

Abstract

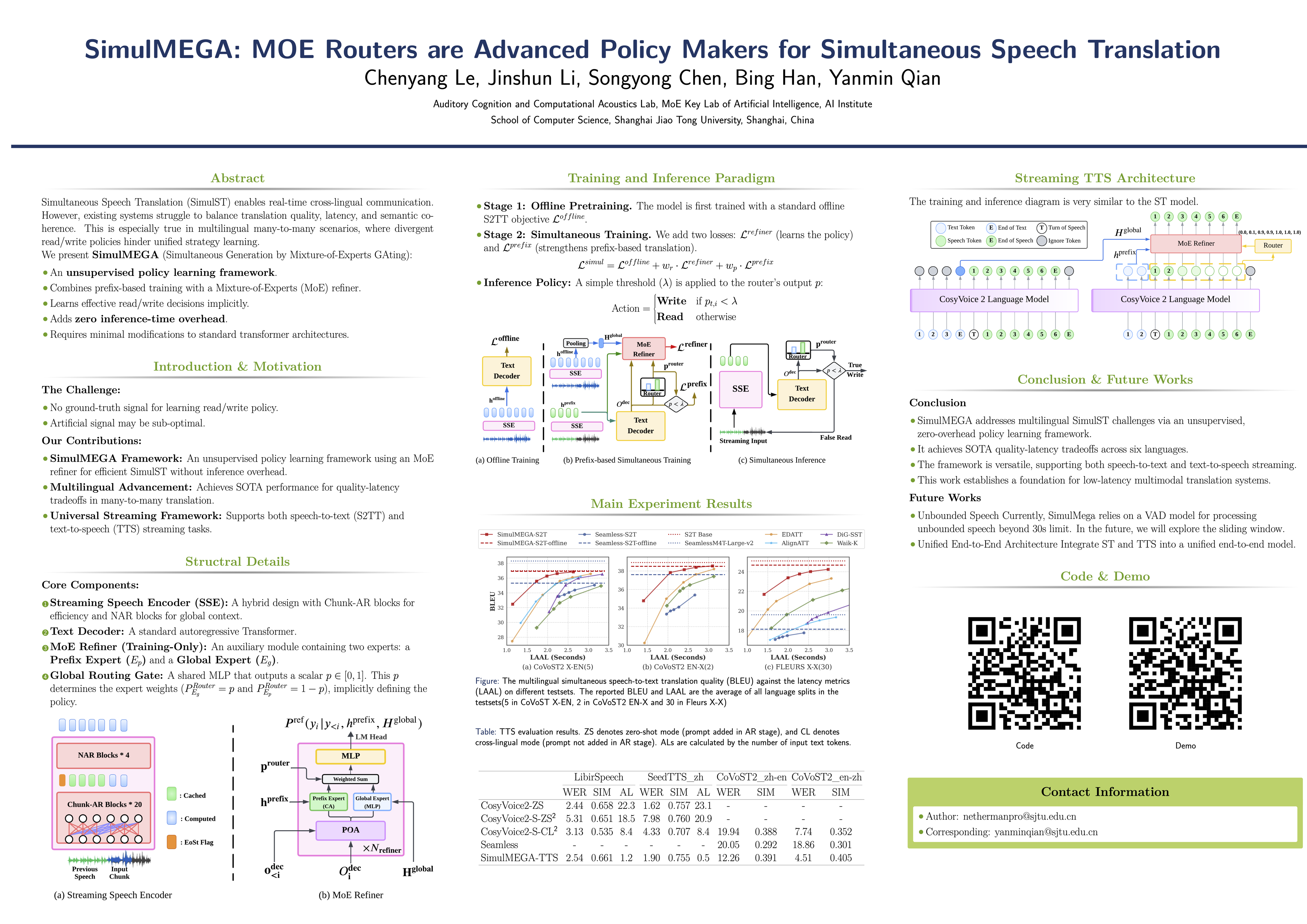

Simultaneous Speech Translation (SimulST) enables real-time cross-lingual communication by jointly optimizing speech recognition and machine translation under strict latency constraints. Existing systems struggle to balance translation quality, latency, and semantic coherence, particularly in multilingual many-to-many scenarios where divergent read/write policies hinder unified strategy learning.

In this paper, we present SimulMEGA (Simultaneous Generation by Mixture-of-Experts GAting), an unsupervised policy learning framework that combines prefix-based training with a Mixture-of-Experts refiner to learn effective read/write decisions implicitly, without adding inference-time overhead. Our design requires only minimal modifications to standard transformer architectures and generalizes across both speech-to-text and text-to-speech streaming tasks.

In evaluations on six language pairs, our 500M-parameter speech-to-text model outperforms the Seamless baseline, achieving under 7% BLEU degradation at 1.5 s average lag and under 3% at 3 s. We further demonstrate SimulMEGA’s versatility by extending it to streaming TTS via a unidirectional backbone, yielding superior latency–quality trade-offs.