Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#511

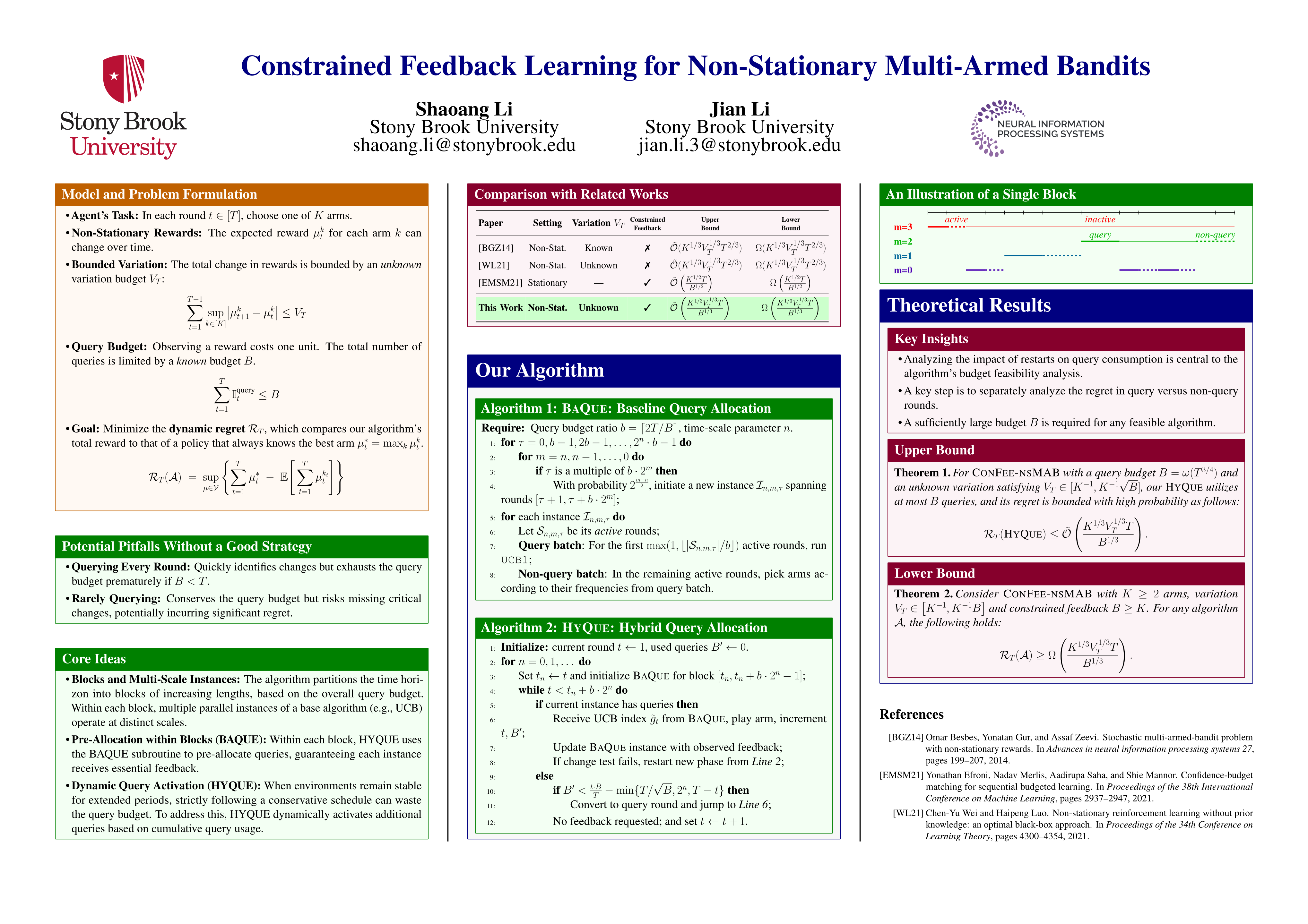

Constrained Feedback Learning for Non-Stationary Multi-Armed Bandits

Abstract

Non-stationary multi-armed bandits (nsMAB) enable agents to adapt to changing environments by incorporating mechanisms to detect and respond to shifts in reward distributions, making them well-suited for dynamic settings. However, existing approaches typically assume that reward feedback is available at every round—an assumption that overlooks many real-world scenarios where feedback is limited.

In this paper, we take a significant step forward by introducing a new model of constrained feedback in non-stationary multi-armed bandits (ConFee-nsMAB), where the availability of reward feedback is restricted. We propose the first prior-free algorithm—that is, one that does not require prior knowledge of the degree of non-stationarity—that achieves near-optimal dynamic regret in this setting.

Specifically, our algorithm attains a dynamic regret of , where is the number of rounds, is the number of arms, is the query budget, and is the variation budget capturing the degree of non-stationarity.