Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#1111

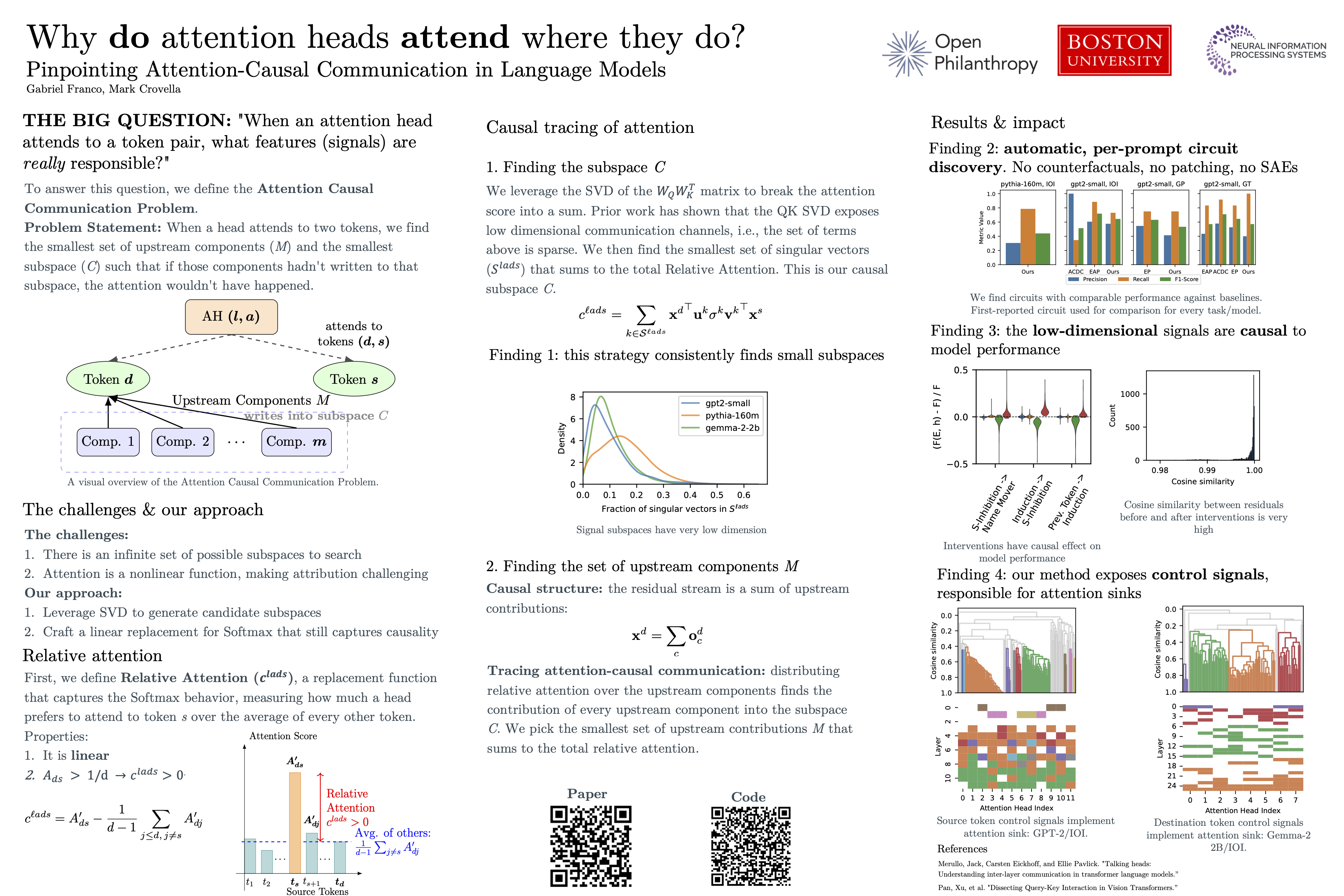

Pinpointing Attention-Causal Communication in Language Models

Abstract

The attention mechanism plays a central role in the computations performed by transformer-based models, and understanding why heads attend to specific tokens can aid the interpretability of language models. Although considerable work has shown that models construct low-dimensional feature representations, little work has explicitly tied low-dimensional features to the attention mechanism itself.

In this paper, we work to bridge this gap by presenting methods for identifying attention-causal communication, meaning low-dimensional features that are written into and read from tokens and that have a provable causal relationship to attention patterns. The starting point for our method is prior work [1-3] showing that model components make use of low-dimensional communication channels that can be exposed by the singular vectors of QK matrices. Our contribution is to provide a rigorous and principled approach to finding those channels and isolating the attention-causal signals they contain.
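As a rough illustration of the singular-vector view mentioned above (not the paper's actual procedure), the sketch below splits a single pre-softmax attention score into one term per singular direction of a head's combined QK matrix. The function name `qk_channel_contributions`, the weight shapes, and the random inputs are all assumptions made for this example; biases, layer normalization, positional terms, and the usual 1/sqrt(d_head) scaling are omitted.

```python
import numpy as np

def qk_channel_contributions(W_Q, W_K, x_dst, x_src):
    """Split one raw attention score into per-singular-direction terms.

    Hypothetical helper for illustration only.
    W_Q, W_K: (d_model, d_head) query/key projection weights.
    x_dst, x_src: (d_model,) residual vectors of the attending and attended tokens.
    """
    # Bilinear form behind the score: score = x_dst^T (W_Q W_K^T) x_src
    omega = W_Q @ W_K.T                      # (d_model, d_model), rank <= d_head
    U, S, Vt = np.linalg.svd(omega)
    r = W_Q.shape[1]                         # keep only the d_head non-trivial directions
    U, S, Vt = U[:, :r], S[:r], Vt[:r]
    # Term k is (x_dst . u_k) * s_k * (v_k . x_src); the terms sum to the score.
    return (x_dst @ U) * S * (Vt @ x_src)

# Toy usage with random weights (assumed sizes, not taken from any real model).
rng = np.random.default_rng(0)
d_model, d_head = 16, 4
W_Q = rng.normal(size=(d_model, d_head))
W_K = rng.normal(size=(d_model, d_head))
x_dst = rng.normal(size=d_model)
x_src = rng.normal(size=d_model)

contrib = qk_channel_contributions(W_Q, W_K, x_dst, x_src)
score = (x_dst @ W_Q) @ (W_K.T @ x_src)
print(np.isclose(contrib.sum(), score))
```

Under these assumptions, a score dominated by a few entries of `contrib` would suggest that a low-dimensional channel carries most of the signal for that token pair; establishing that such a channel is actually causal for the attention pattern is the part the paper addresses.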

We show that by identifying those signals, we can perform prompt-specific circuit discovery in a single forward pass. Further, we show that these signals can uncover unexplored mechanisms at work in the model, including a surprising degree of global coordination across attention heads.