Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#4106

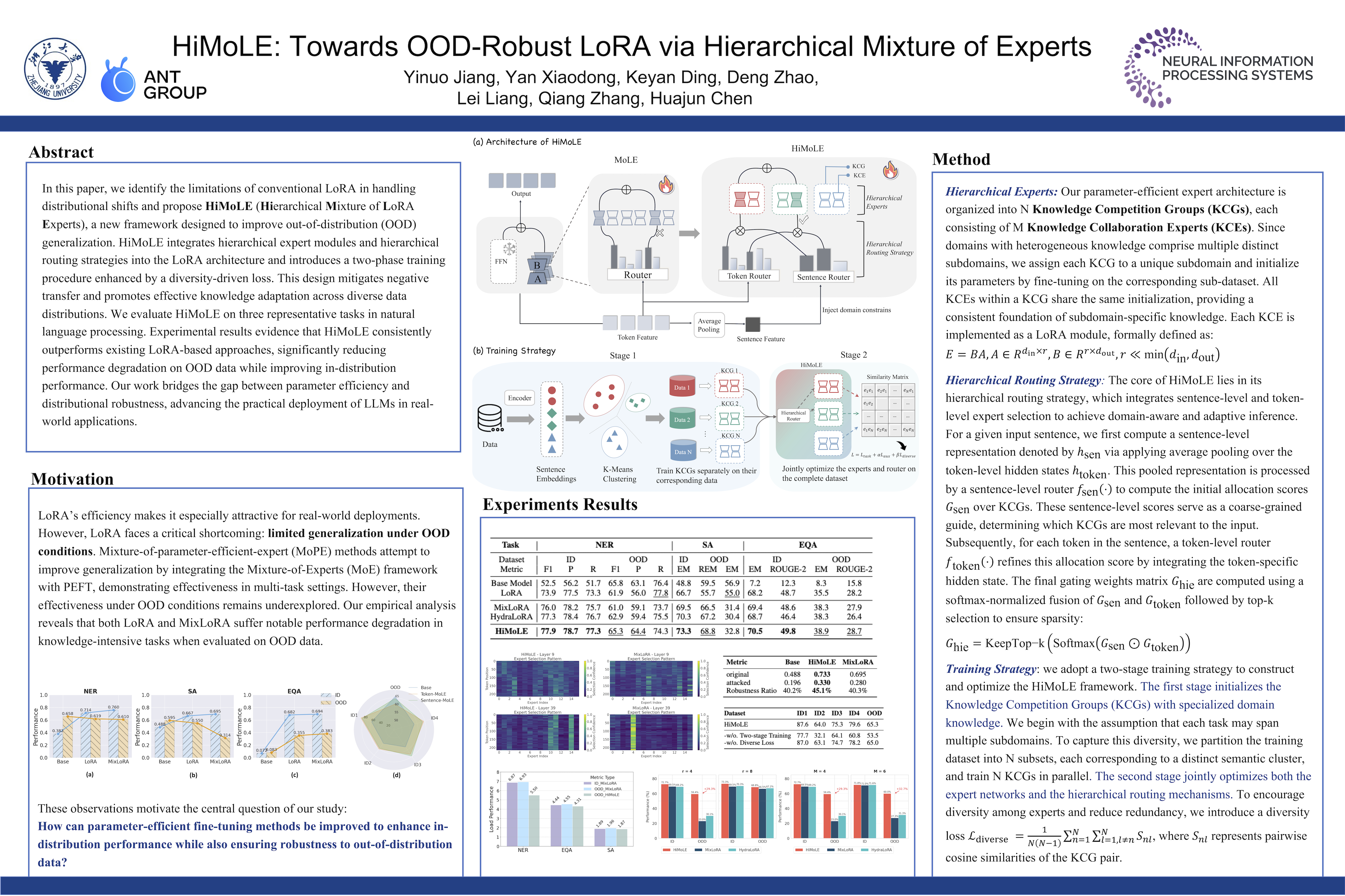

HiMoLE: Towards OOD-Robust LoRA via Hierarchical Mixture of Experts

Abstract

Parameter-efficient fine-tuning (PEFT) methods, such as LoRA, have enabled the efficient adaptation of large language models (LLMs) by updating only a small subset of parameters. However, their robustness under out-of-distribution (OOD) conditions remains insufficiently studied.

In this paper, we identify the limitations of conventional LoRA in handling distributional shifts and propose HiMoLE (Hierarchical Mixture of LoRA Experts), a new framework designed to improve OOD generalization. HiMoLE integrates hierarchical expert modules and hierarchical routing strategies into the LoRA architecture and introduces a two-phase training procedure enhanced by a diversity-driven loss. This design mitigates negative transfer and promotes effective knowledge adaptation across diverse data distributions.

We evaluate HiMoLE on three representative tasks in natural language processing. Experimental results show that HiMoLE consistently outperforms existing LoRA-based approaches, significantly reducing performance degradation on OOD data while improving in-distribution performance. Our work bridges the gap between parameter efficiency and distributional robustness, advancing the practical deployment of LLMs in real-world applications.
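
The abstract does not spell out the architecture, so the following is only an illustrative sketch of what a hierarchically routed mixture of LoRA experts could look like, not the authors' implementation. It assumes a two-level router (first over expert groups, then over experts within each group), soft gating, and a cosine-similarity penalty as one possible diversity-driven loss. The class `HierarchicalLoRAMixture`, the function `diversity_penalty`, and all shapes and hyperparameters are hypothetical names chosen for this example.

```python
import numpy as np


def softmax(x):
    """Numerically stable softmax over the last axis."""
    z = x - x.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


class HierarchicalLoRAMixture:
    """Hypothetical two-level mixture of LoRA experts on one linear layer."""

    def __init__(self, d_in, d_out, n_groups=2, experts_per_group=2, rank=4, seed=0):
        rng = np.random.default_rng(seed)
        # Frozen base weight (stands in for a pretrained layer).
        self.W = rng.normal(0.0, 0.02, (d_in, d_out))
        # One low-rank pair (A, B) per expert; B starts at zero as in standard LoRA.
        self.A = rng.normal(0.0, 0.02, (n_groups, experts_per_group, d_in, rank))
        self.B = np.zeros((n_groups, experts_per_group, rank, d_out))
        # Assumed routers: one over groups, one per group over its experts.
        self.group_router = rng.normal(0.0, 0.02, (d_in, n_groups))
        self.expert_router = rng.normal(0.0, 0.02, (n_groups, d_in, experts_per_group))

    def gates(self, x):
        """Return gate weights of shape (batch, groups, experts) summing to 1."""
        g = softmax(x @ self.group_router)                             # (b, G)
        e = softmax(np.einsum("bd,gde->bge", x, self.expert_router))   # (b, G, E)
        return g[:, :, None] * e

    def forward(self, x):
        """Base output plus the gate-weighted sum of expert LoRA updates."""
        gate = self.gates(x)
        low = np.einsum("bd,gedr->bger", x, self.A)          # project to rank r
        delta = np.einsum("bger,gero->bgeo", low, self.B)    # project to d_out
        return x @ self.W + np.einsum("bge,bgeo->bo", gate, delta)


def diversity_penalty(A):
    """Mean squared cosine similarity between distinct experts' A matrices.

    An assumed stand-in for a diversity-driven loss: lower means more diverse.
    """
    flat = A.reshape(-1, A.shape[-2] * A.shape[-1])
    flat = flat / np.linalg.norm(flat, axis=1, keepdims=True)
    sim = flat @ flat.T
    n = sim.shape[0]
    off_diag = sim - np.eye(n)
    return float((off_diag ** 2).sum() / (n * (n - 1)))
```

Because every `B` is initialized to zero, the layer initially reproduces the frozen base output, and the penalty could be added to the task loss during training to discourage experts from collapsing onto the same subspace. How the two training phases are split is not stated in the abstract and is not modeled here.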