Poster Session 1 · Wednesday, December 3, 2025 11:00 AM → 2:00 PM

#3103

Efficiently Maintaining the Multilingual Capacity of MCLIP in Downstream Cross-Modal Retrieval Tasks

Abstract

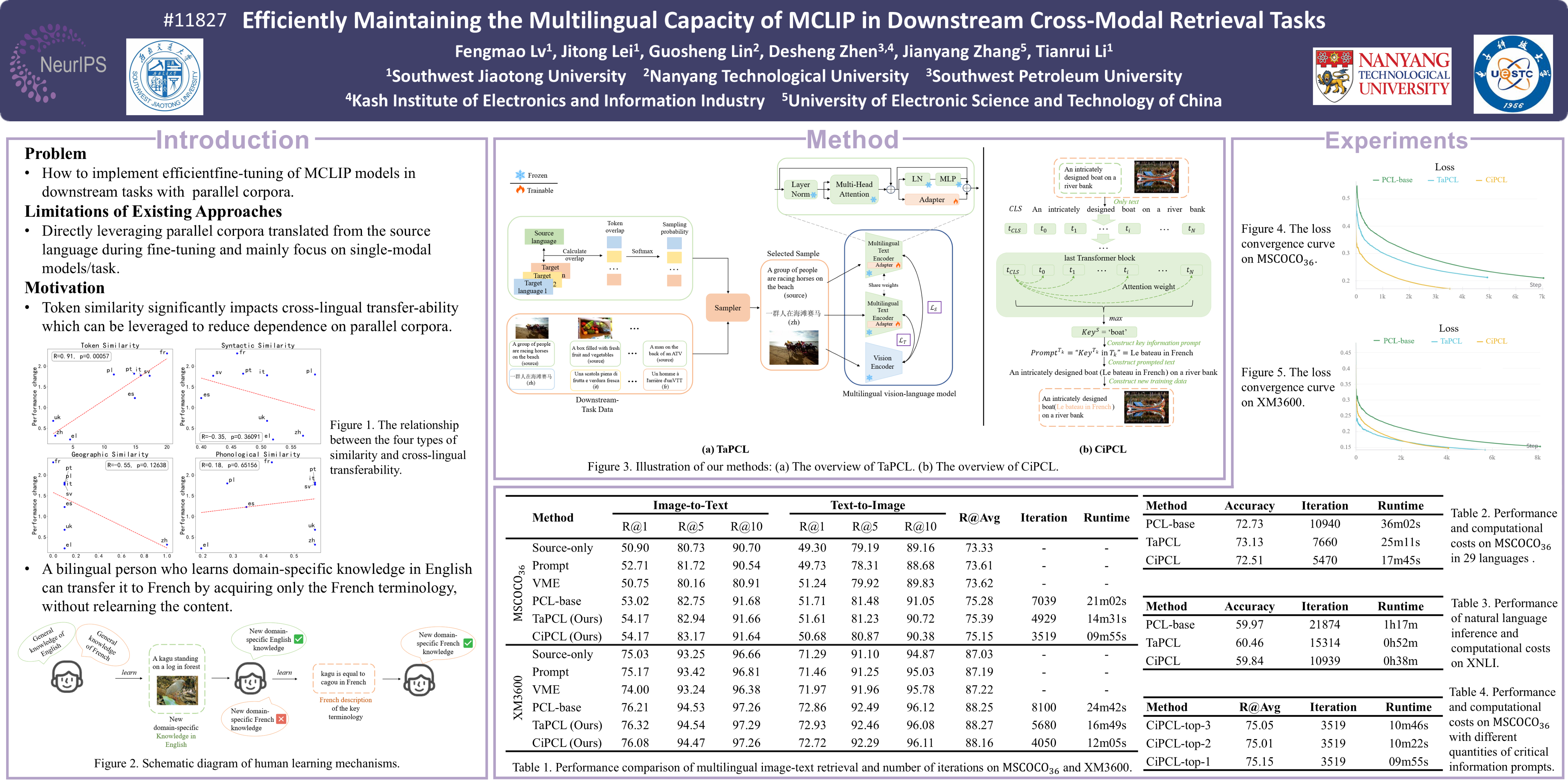

While existing research on Multilingual CLIP (MCLIP) has prioritized model architecture design, our work uncovers a critical challenge in practical adaptation: fine-tuning MCLIP on a single source language risks diminishing its multilingual capabilities in downstream tasks because of cross-linguistic disparities. To bridge this gap, we systematically investigate the role of token similarity in cross-lingual transferability for image-text retrieval, establishing it as a key factor governing fine-tuning efficacy.

Building on this insight, we propose two novel strategies to enhance efficiency while preserving multilinguality:

- TaPCL dynamically optimizes training by prioritizing linguistically distant language pairs during corpus sampling, which reduces redundant computation.

- CiPCL enriches the source corpus with multilingual key terms, enabling targeted knowledge transfer without reliance on exhaustive parallel data.
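
To make the two ideas concrete, the following is a minimal illustrative sketch and not the authors' implementation (that is in the repository linked below). It assumes that token similarity can be approximated by Jaccard overlap between token sets, that sampling weights are proportional to one minus that similarity, and that key-term enrichment simply appends translations to a caption. All function names (`token_jaccard`, `sampling_weights`, `sample_languages`, `enrich_caption`) and the toy data are hypothetical.

```python
import random


def token_jaccard(tokens_a, tokens_b):
    """Jaccard overlap between two token collections (assumed similarity proxy)."""
    a, b = set(tokens_a), set(tokens_b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def sampling_weights(source_tokens, target_tokens_by_lang):
    """Weight each target language by its distance (1 - similarity) to the source.

    Assumption: more distant languages should be sampled more often.
    """
    distances = {
        lang: 1.0 - token_jaccard(source_tokens, tokens)
        for lang, tokens in target_tokens_by_lang.items()
    }
    total = sum(distances.values())
    if total == 0:
        # Every language is identical to the source: fall back to uniform weights.
        return {lang: 1.0 / len(distances) for lang in distances}
    return {lang: d / total for lang, d in distances.items()}


def sample_languages(weights, k, seed=0):
    """Draw k target languages according to the weights (seeded for repeatability)."""
    rng = random.Random(seed)
    langs = list(weights)
    return rng.choices(langs, weights=[weights[lang] for lang in langs], k=k)


def enrich_caption(caption, key_terms):
    """Append translations of any key term that occurs in the caption.

    `key_terms` maps a source-language term to a list of translations
    (a hypothetical stand-in for a multilingual key-term dictionary).
    """
    words = caption.lower().split()
    extras = []
    for term, translations in key_terms.items():
        if term in words:
            extras.extend(translations)
    if not extras:
        return caption
    return caption + " [" + ", ".join(extras) + "]"


if __name__ == "__main__":
    source = ["a", "b", "c", "d"]
    targets = {"de": ["a", "b", "c", "x"], "ja": ["w", "x", "y", "z"]}
    weights = sampling_weights(source, targets)
    print(weights)
    print(sample_languages(weights, k=5))
    print(enrich_caption("A dog on the beach", {"dog": ["Hund", "犬"]}))
```

In this toy setup the language sharing fewer tokens with the source receives the larger sampling weight, and only captions containing a listed key term are extended, so no full parallel corpus is required.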

By strategically balancing token similarity and domain-critical information, our methods significantly lower computational costs and mitigate over-dependence on parallel corpora. Experimental evaluations across diverse datasets validate the effectiveness and scalability of our framework, demonstrating robust multilingual retention across languages.

This work provides a principled pathway for adapting MCLIP to real-world scenarios, where computational efficiency and cross-lingual robustness are paramount. Our code is available at https://github.com/tiggers23/TaPCL-CiPCL.