Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#2713

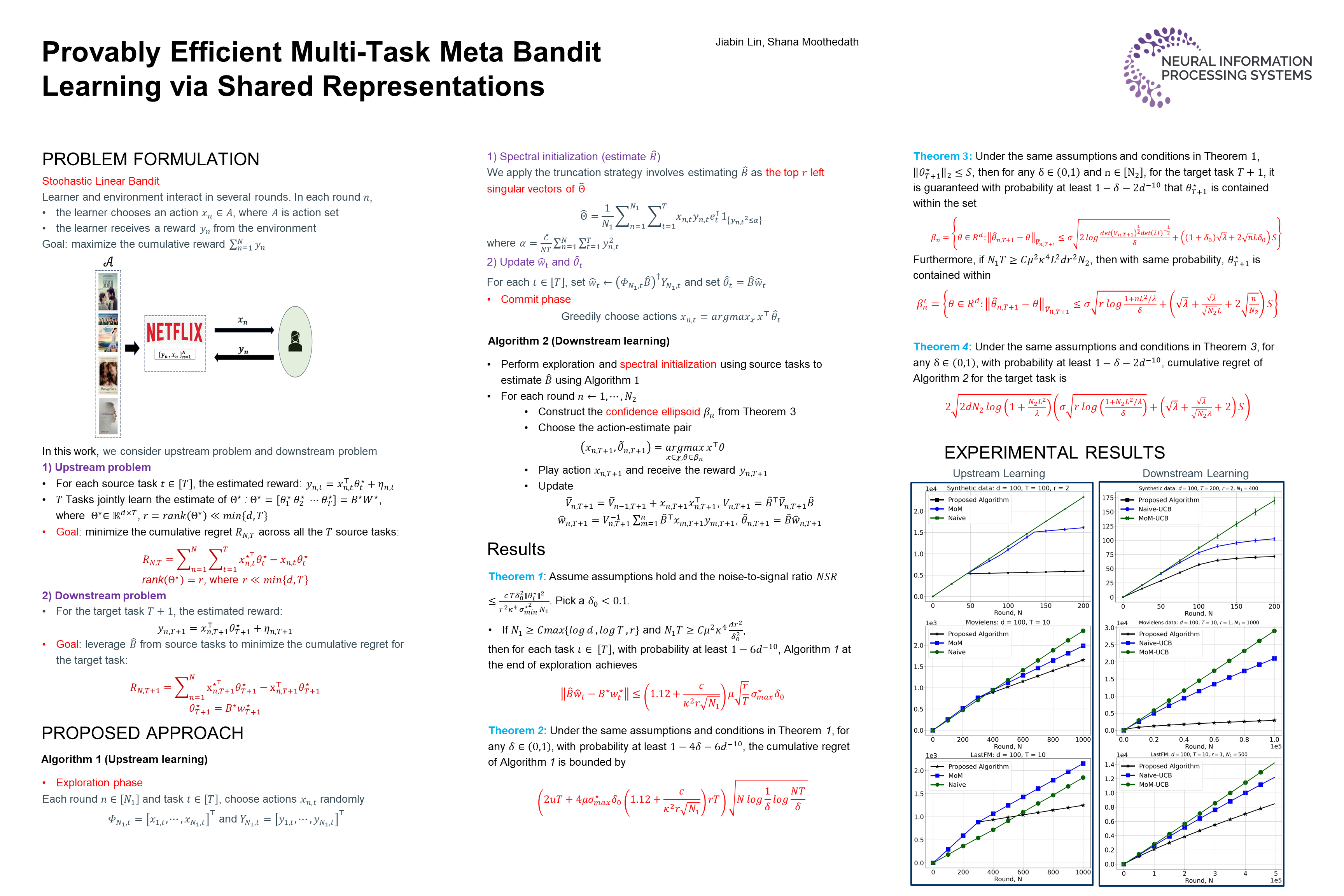

Provably Efficient Multi-Task Meta Bandit Learning via Shared Representations

Abstract

Learning-to-learn, or meta-learning, focuses on developing algorithms that leverage prior experience to quickly acquire new skills or adapt to novel environments. A key component of meta-learning is representation learning, which aims to construct data representations that transfer knowledge across multiple tasks, a critical advantage in data-scarce settings.

We study how representation learning can improve the efficiency of bandit problems. We consider d-dimensional linear bandits that share a common low-dimensional linear representation.
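
One common way to write down such a setting is sketched below. The abstract does not give the model explicitly, so the notation is an assumption for illustration only: $T$ tasks indexed by $t$, ambient dimension $d$, representation dimension $k \ll d$, a shared matrix $B$, task-specific coefficients $w_t$, actions $x_{t,n}$, and zero-mean noise $\eta_{t,n}$.

$$
r_{t,n} = \langle x_{t,n}, \theta_t \rangle + \eta_{t,n},
\qquad
\theta_t = B\, w_t,
\qquad
B \in \mathbb{R}^{d \times k},\; w_t \in \mathbb{R}^{k},\; t = 1, \dots, T.
$$

Under this assumed form, every task parameter $\theta_t$ lies in the same $k$-dimensional column space of $B$, so once $B$ is known a new task requires estimating only $k$ coefficients rather than $d$.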

We provide provably fast, sample-efficient algorithms to address the two key problems in meta-learning:

- learning a common set of features from multiple related bandit tasks, and

- transferring this knowledge to new, unseen bandit tasks.
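
The two problems above can be illustrated with a minimal NumPy sketch. It is not the algorithm presented in this poster: it assumes task parameters of the form `theta_t = B @ w_t`, draws actions at random instead of running an adaptive bandit policy, and swaps in a simple estimator (per-task least squares followed by an SVD). All function names and default values are hypothetical.

```python
import numpy as np


def make_tasks(num_tasks, d, k, rng):
    """Draw task parameters theta_t = B @ w_t sharing one d x k representation B."""
    B, _ = np.linalg.qr(rng.standard_normal((d, k)))  # orthonormal columns
    W = rng.standard_normal((k, num_tasks))           # task-specific coefficients
    return B, B @ W                                   # Theta has shape (d, num_tasks)


def sample_rewards(theta, n, noise, rng):
    """Play n random Gaussian actions (assumed pure exploration) and observe noisy rewards."""
    X = rng.standard_normal((n, theta.shape[0]))
    y = X @ theta + noise * rng.standard_normal(n)
    return X, y


def learn_representation(task_data, k):
    """Problem 1: per-task least squares, then the top-k left singular vectors."""
    estimates = [np.linalg.lstsq(X, y, rcond=None)[0] for X, y in task_data]
    U, _, _ = np.linalg.svd(np.column_stack(estimates), full_matrices=False)
    return U[:, :k]


def transfer(B_hat, X, y):
    """Problem 2: on a new task, fit only k coefficients inside the learned subspace."""
    w_hat = np.linalg.lstsq(X @ B_hat, y, rcond=None)[0]
    return B_hat @ w_hat


def run_demo(seed=0, d=30, k=3, num_tasks=40, n_train=100, n_new=15, noise=0.1):
    rng = np.random.default_rng(seed)
    _, Theta = make_tasks(num_tasks + 1, d, k, rng)
    train = [sample_rewards(Theta[:, t], n_train, noise, rng) for t in range(num_tasks)]
    B_hat = learn_representation(train, k)

    # The held-out task has fewer samples than dimensions (n_new < d).
    theta_new = Theta[:, -1]
    X, y = sample_rewards(theta_new, n_new, noise, rng)
    err_transfer = np.linalg.norm(transfer(B_hat, X, y) - theta_new)
    # Baseline: minimum-norm least squares in all d dimensions, ignoring B_hat.
    err_scratch = np.linalg.norm(np.linalg.lstsq(X, y, rcond=None)[0] - theta_new)
    return err_transfer, err_scratch


if __name__ == "__main__":
    err_transfer, err_scratch = run_demo()
    print(f"error with learned representation: {err_transfer:.3f}")
    print(f"error learning from scratch:       {err_scratch:.3f}")
```

In this toy setup the new task is observed fewer times than it has dimensions, so the from-scratch estimate cannot recover the parameter, while the estimate restricted to the learned subspace only has to fit `k` numbers.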

We validate the theoretical results through numerical experiments on real-world and synthetic datasets, comparing our algorithms against benchmark algorithms.